Publication-Ready Academic Research Manuscript

March 2026

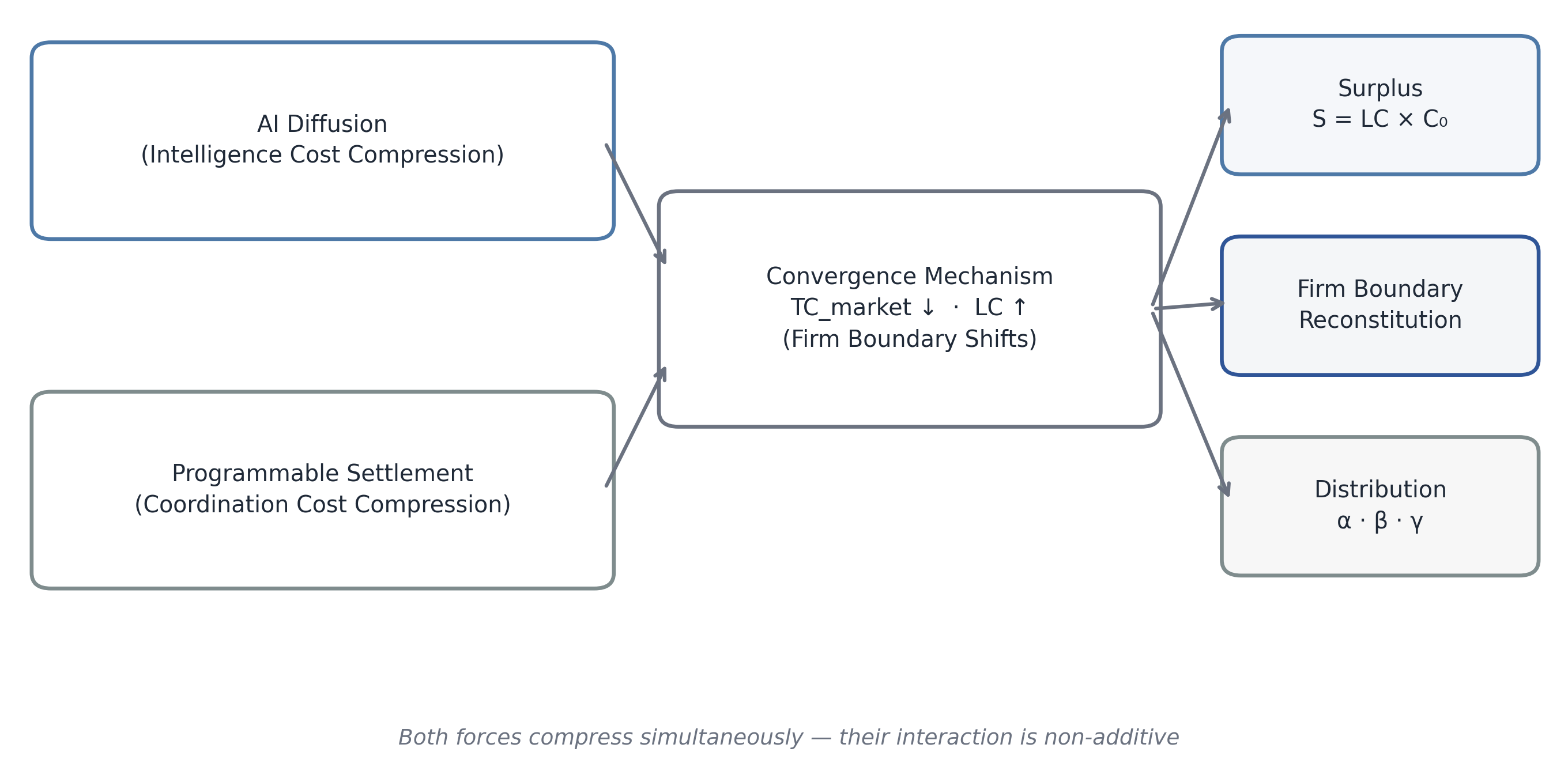

This manuscript develops an integrated institutional framework for a structural economic transition driven by the simultaneous compression of intelligence costs and coordination costs. The first force is AI-enabled labor compression in knowledge-intensive tasks; the second is programmable settlement infrastructure that reduces search, contracting, enforcement, settlement latency, and reconciliation overhead. Building on transaction-cost economics and macro distribution theory, the paper defines an aggregate surplus identity S = LC × C₀ and models surplus allocation across three channels: corporate profit (α), micro-enterprise income (β), and infrastructure rent (γ), with α + β + γ = 1. The central claim is that the distribution of this surplus, not productivity growth alone, is the decisive macro variable for the decade ahead. The manuscript presents microeconomic parameterization, general-equilibrium labor-share dynamics, sectoral implications, governance redesign, scenario architecture for 2026-2036, portfolio allocation implications, and policy measurement reforms. The analysis indicates a likely hybrid equilibrium in which incumbents retain significant strategic roles while firm boundaries become more modular and infrastructure concentration rises. Distributional outcomes depend on regulatory design, open-model diffusion, and institutional adaptation velocity.

Eisenberg, Barry. 2026. The Convergence Economy: Intelligence, Settlement, and the Re-Architecture of the Firm. Publication-ready academic manuscript.

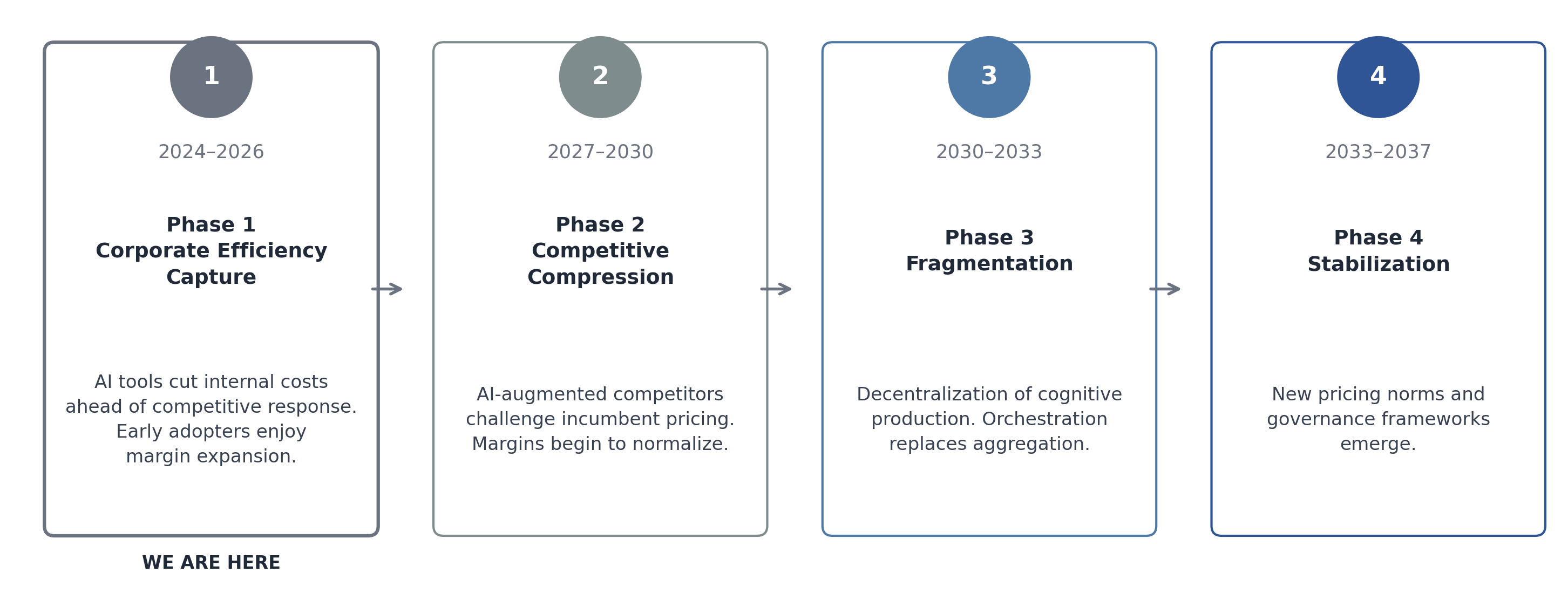

The global economy is entering a structural inflection of a kind that occurs rarely and matters disproportionately. Unlike cyclical contractions or sector-specific disruptions, what is now underway involves the simultaneous and persistent compression of two foundational economic inputs: the cost of generating cognitive output and the cost of coordinating exchange. The first is driven by the diffusion of general-purpose artificial intelligence. The second is driven by programmable settlement infrastructure — smart contracts, tokenized payment rails, and real-time clearing mechanisms — that materially reduces the friction of transacting across institutional, geographic, and temporal boundaries.

Either force, operating in isolation, would represent a meaningful productivity event. AI reducing the labor intensity of knowledge work would, on its own, alter the cost structures of every sector that competes on intellectual capital. Programmable settlement reducing coordination friction would, on its own, restructure supply chains, compress financial intermediation, and expand the feasible scope of distributed production. But these forces are not operating in isolation. They are compressing simultaneously, and their interaction produces effects that are non-additive. The resulting macro-institutional environment — the Convergence Economy — demands an analytical framework calibrated to its specific structural properties, rather than one borrowed from prior periods of technological change.

The central economic consequence of this convergence is the generation of surplus at a scale that is historically unusual. The surplus arises because two major cost inputs — cognitive labor and coordination overhead — are being reduced faster than output prices in competitive markets can adjust. In a frictionless economy, this surplus would immediately dissipate through competition. In the actual economy, institutional rigidities, regulatory lags, first-mover advantages, and infrastructure concentration mean that surplus persists, at least in the medium term, before being competed away.

Defining the Surplus and Its Distribution

Let the baseline compressible labor cost across an enterprise or economic sector be denoted C₀. This is the labor expenditure that is, in principle, substitutable by AI-enabled tooling — chiefly knowledge-work tasks: analysis, synthesis, drafting, coding, classification, and structured judgment. Let the effective labor compression achieved through AI integration be LC, expressed as a fraction of C₀. Then the annual surplus generated by intelligence compression is:

S = LC × C₀

This surplus is not retained automatically by any single constituency. Its distribution is a function of market structure, bargaining power, regulatory regime, and the configuration of ownership over the infrastructure that delivers the compression. The distribution identity can be written:

S = P + M + I, with P = αS (corporate profit), M = βS (micro-enterprise income), and I = γS (infrastructure rent)

Where:

- α (alpha) captures the share of surplus accruing to corporate profit: the incumbent firm capturing margin expansion from reduced headcount or reallocated labor
- β (beta) captures the share accruing to micro-enterprise income: distributed operators, freelancers, and independent specialists enabled by lower minimum scale thresholds and cheaper coordination
- γ (gamma) captures infrastructure rent: the share flowing to the owners of AI platforms, settlement networks, and the underlying compute and protocol infrastructure

The sum of these coefficients is constrained to unity by construction: α + β + γ = 1. But their individual values are not fixed by technology alone. They are determined by the institutional and political economy that surrounds the technology. A high-α outcome — one in which corporate incumbents capture the dominant share of surplus — produces margin expansion, labor displacement without redistribution, and rising concentration. A high-β outcome — one in which micro-enterprise formations absorb a significant share — produces labor market fragmentation, the proliferation of independent operators, and a structural shift in how work is organized without necessarily reducing aggregate employment. A high-γ outcome — infrastructure rent dominance — concentrates returns among platform and protocol operators, raising questions about competitive access and systemic dependency.
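The allocation logic above can be made concrete with a short sketch. The Python below is illustrative only: the names `SurplusAllocation` and `allocate_surplus`, the example shares, and the cost base are assumptions chosen for demonstration, not values estimated in this manuscript.

```python
from dataclasses import dataclass
import math


@dataclass(frozen=True)
class SurplusAllocation:
    corporate_profit: float     # alpha * S
    micro_enterprise: float     # beta * S
    infrastructure_rent: float  # gamma * S


def allocate_surplus(lc: float, c0: float,
                     alpha: float, beta: float, gamma: float) -> SurplusAllocation:
    """Split the surplus S = LC * C0 across the three channels."""
    if min(alpha, beta, gamma) < 0:
        raise ValueError("shares must be non-negative")
    if not math.isclose(alpha + beta + gamma, 1.0, abs_tol=1e-9):
        raise ValueError("alpha + beta + gamma must equal 1")
    surplus = lc * c0
    return SurplusAllocation(alpha * surplus, beta * surplus, gamma * surplus)


# Hypothetical inputs: 12% effective compression on a cost base of 100 units,
# with an assumed 50/20/30 split across profit, micro-enterprise, and rent.
example = allocate_surplus(lc=0.12, c0=100.0, alpha=0.5, beta=0.2, gamma=0.3)
print(example)
```

Because the shares are validated to sum to one, the three channel amounts always add back to S; shifting weight between α, β, and γ changes who receives the surplus, not its size.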

The Decisive Variable of the Decade

The distribution of surplus S is therefore not merely a distributional question in the ethical sense. It is the decisive macro variable for the next decade. The coefficients α, β, and γ determine: the trajectory of labor’s share in national income; the degree of corporate profit concentration and the valuation of incumbent firms; the viability of new enterprise models organized around AI-enabled micro-operators; and the political sustainability of the transition, given that rapid compression of labor income without compensating income streams is historically associated with institutional instability.

This manuscript offers an integrated macro-institutional framework for understanding the Convergence Economy in its current early phase and for deriving strategic guidance appropriate to the conditions it is creating. It is addressed to three audiences: corporate boards and executive leadership teams navigating the re-architecture of the firm; policymakers assessing the distributional and stability implications of rapid structural change; and institutional investors positioning portfolios for a transition that is structural rather than cyclical.

Nine Strategic Imperatives

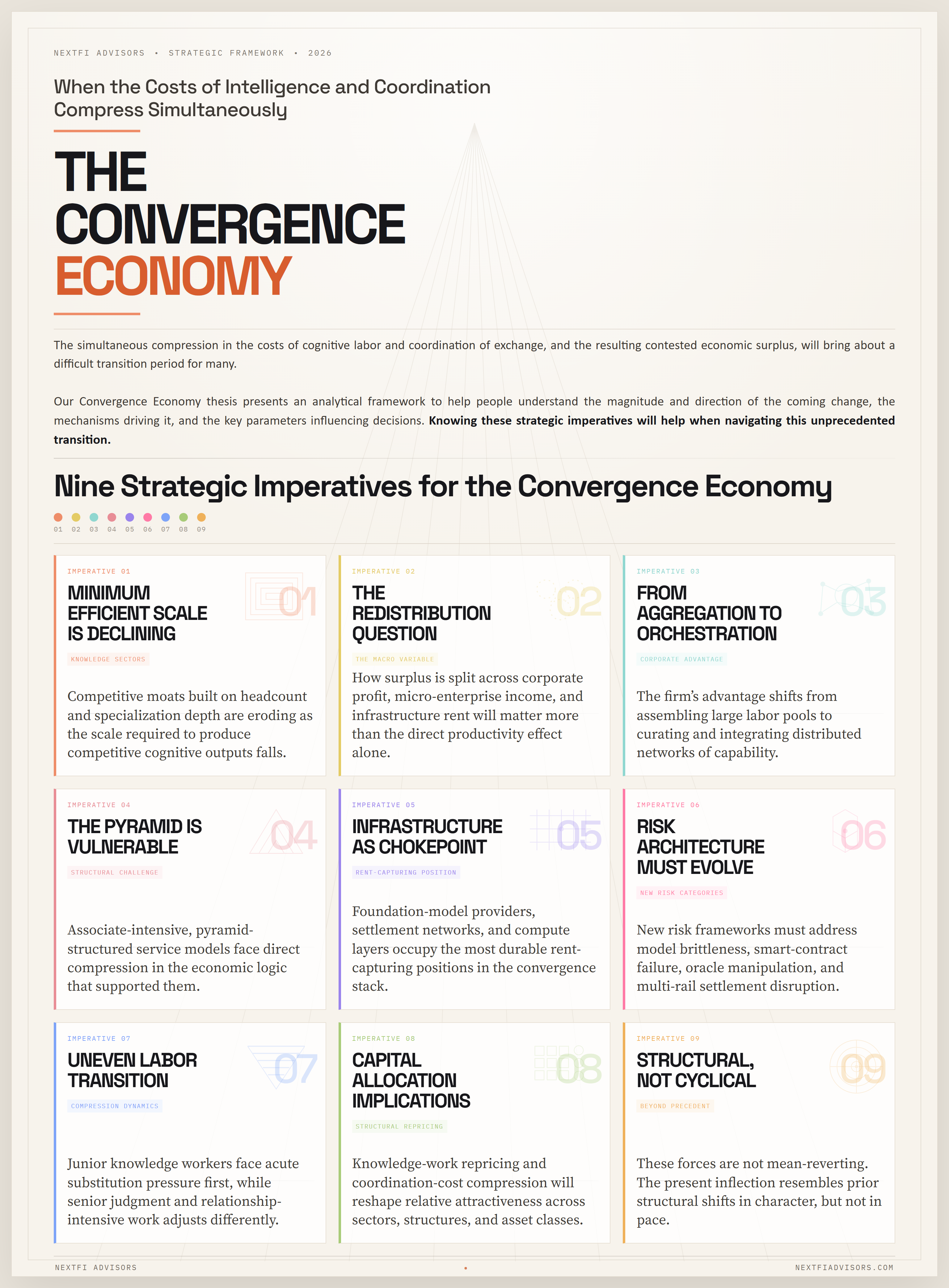

Nine strategic imperatives follow from the Convergence Economy framework and will be developed across the sections that follow:

Minimum efficient scale is declining in knowledge sectors. The scale required to produce competitive cognitive outputs is falling, which means that competitive moats built on headcount and specialization depth are eroding. Firm strategy must be reoriented accordingly.

The redistribution question is the macro variable. The distribution coefficients α, β, and γ will shape aggregate demand, labor market structure, and political economy in ways that dwarf the direct productivity effects of AI diffusion. Ignoring redistribution dynamics produces systematically incomplete forecasts.

Corporate advantage shifts from labor aggregation to orchestration. As the productivity of external operators rises and coordination costs fall, the firm’s comparative advantage migrates from assembling and managing large labor pools to curating, directing, and integrating distributed networks of capability.

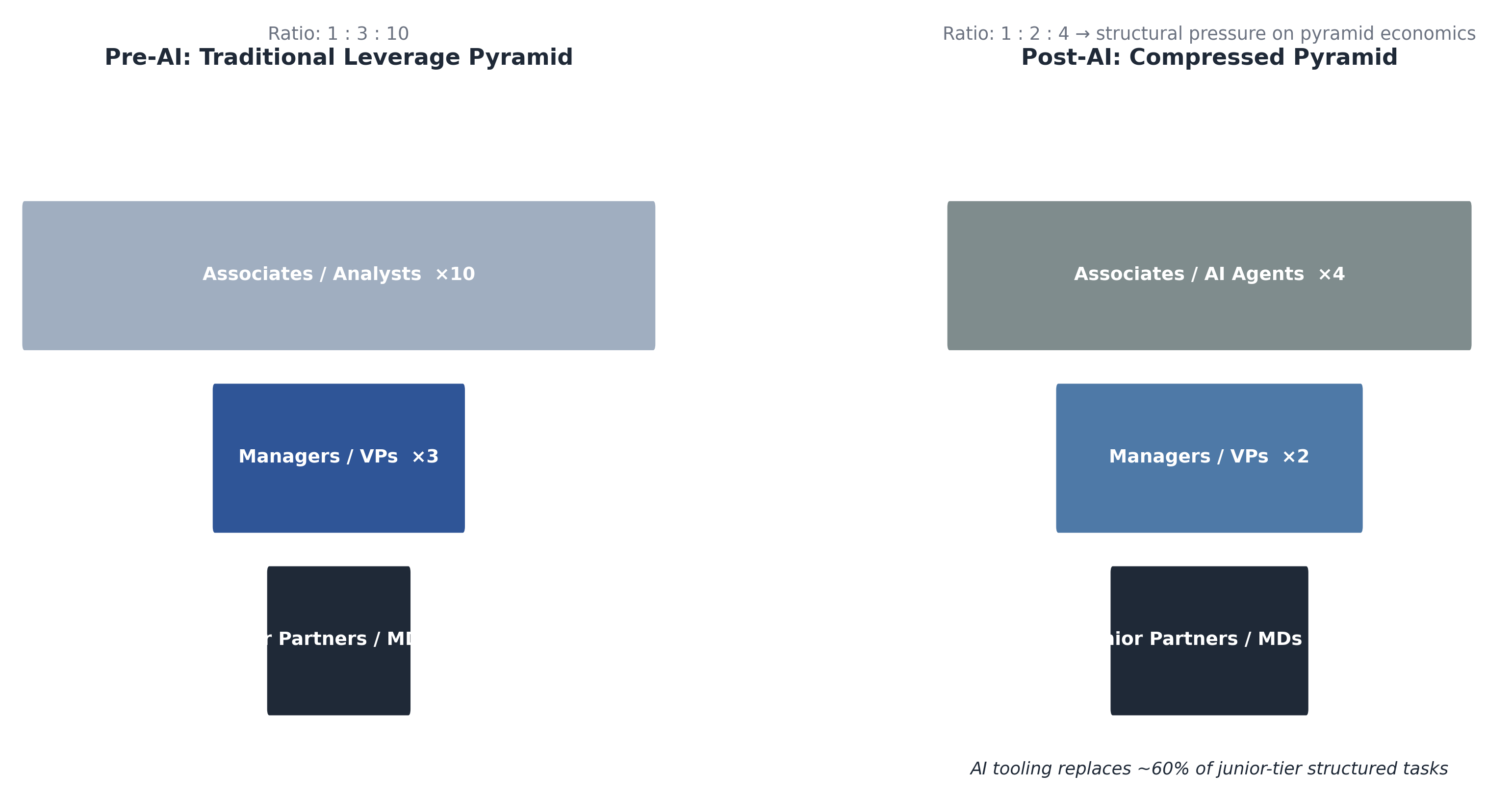

The leverage pyramid model is structurally vulnerable. Professional service models built on the multiplication of junior labor — associate-intensive, pyramid-structured billing — face direct compression in their core value mechanism. This is not a cyclical headcount pressure; it is a structural challenge to the economic logic of the model.

Infrastructure is the strategic chokepoint. As both intelligence and coordination migrate to platform and protocol infrastructure, the owners of that infrastructure — AI foundation model providers, settlement network operators, compute hyperscalers — occupy a γ-capturing position that is likely to be durable and politically contested.

Risk architecture must evolve. The compression of coordination friction creates new categories of operational and systemic risk: smart contract failure, oracle manipulation, AI model brittleness, and multi-rail settlement disruption. Existing risk frameworks are calibrated for a higher-friction environment and require revision.

Labor market transition will be uneven. Compression falls unevenly across task types, geographies, and credential structures. The junior-tier knowledge worker faces acute substitution pressure. Senior judgment and relationship-intensive work faces a different, and potentially more favorable, dynamic. Blanket narratives of displacement therefore overstate the pressure on senior, judgment-intensive work and understate it for the junior tier.

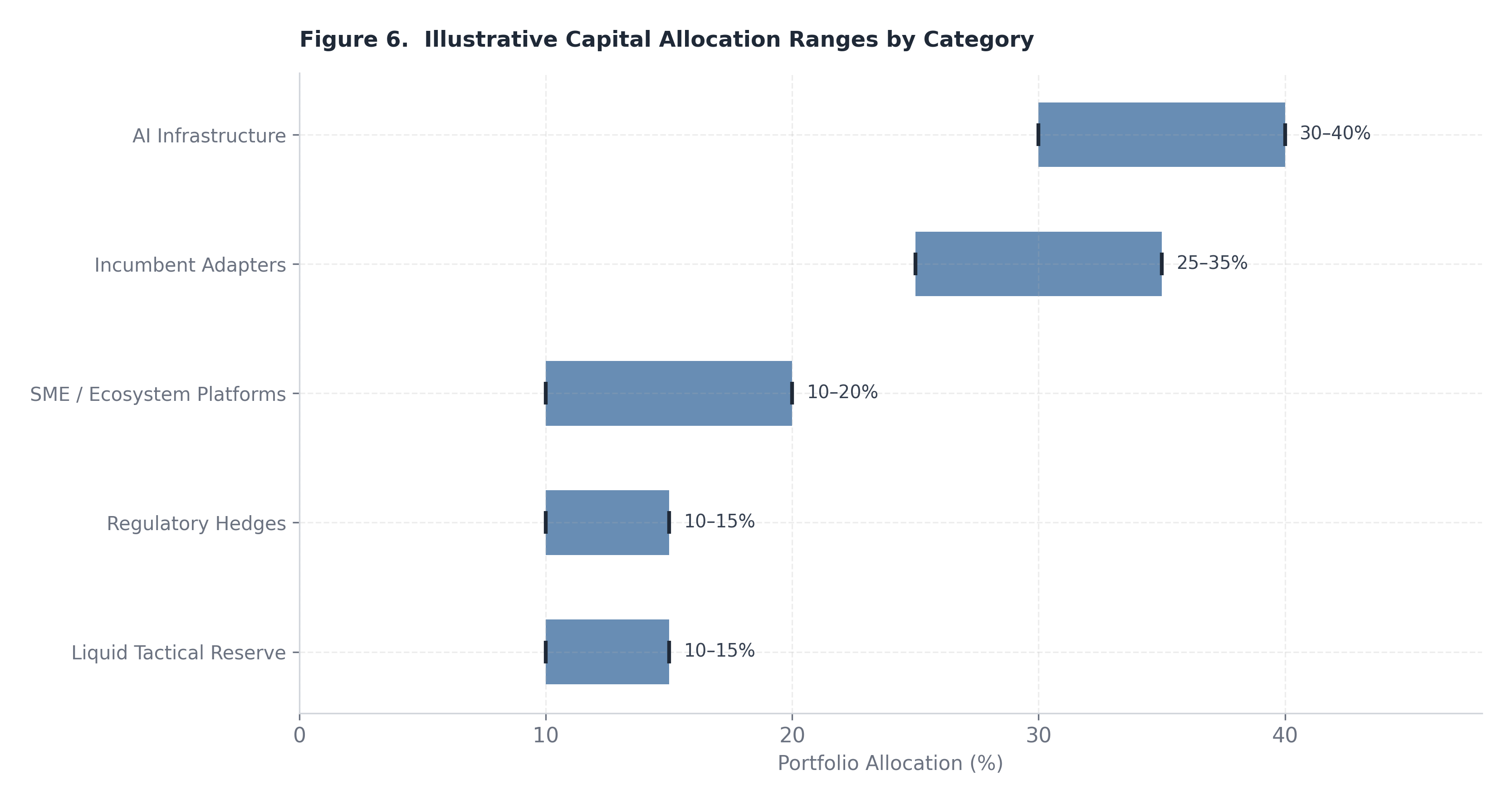

Capital allocation implications are non-trivial. The structural repricing of knowledge-work output, the compression of coordination costs, and the expansion of AI-native enterprise models alter the relative attractiveness of asset classes, sectors, and corporate structures in ways that require revised allocation frameworks.

The decade ahead is structural, not cyclical. The forces at work are not mean-reverting. Prior productivity cycles — electrification, personal computing, internet diffusion — were each followed by multi-decade adjustments in firm structure, labor markets, and capital allocation. The present inflection will be no different in character, even if it is faster in pace.

The sections that follow develop each of these themes analytically. Sections 2 through 4 lay the microeconomic and transaction-cost foundations. Subsequent sections address the implications for firm structure, labor markets, capital allocation, and the policy environment. The framework offered here does not claim predictive certainty; structural transitions of this magnitude resist precise forecasting. What it does claim is that the analytical tools governing strategic planning in the pre-convergence period are insufficient for navigating the one now underway.

Figure 1. Structural logic of concurrent intelligence and coordination compression and downstream institutional effects.

The proposition that general-purpose technologies reorganize the structure of production is not novel. Economic historians from Schumpeter through Mokyr to David have demonstrated, with considerable empirical support, that certain technological advances (those characterized by their breadth of applicability, their capacity to generate productivity improvements across multiple unrelated sectors, and their tendency to stimulate complementary innovations) produce systemic restructuring rather than incremental adjustment. What is less consistently appreciated is the mechanism through which this restructuring operates: general-purpose technologies generate durable structural change specifically when they alter the relative costs of the fundamental inputs to production in a persistent and material way. It is this input-cost repricing, and not simply the technology itself, that forces the reorganization of the firm.

Three Prior Waves and Their Structural Logic

Mechanization, the first industrial wave, compressed the cost of applying physical force to productive tasks. The immediate consequence was not simply that individual workers became more productive; it was that the minimum scale required to produce competitively in manufacturing fell, then rose, as power equipment became less expensive but also more complex to operate, maintain, and integrate. The firm consolidated because the coordination of mechanized production required spatial proximity and hierarchical management structures that were economically justified by the enormous reduction in per-unit physical labor cost. The factory — a site of aggregated labor under centralized supervision — became the dominant institutional form not because of ideology or convention but because it was the organizational structure best suited to capturing the surplus that mechanization created.

Electrification, the second wave, compressed the cost of deploying energy across a production process. Unlike steam, electricity was divisible, transportable within a facility, and controllable at the point of use. This allowed the reorganization of factory floors, the extension of productive activity into the evening, and eventually the emergence of service industries dependent on continuous power availability. What electrification did not do, in its early phases, was reduce the coordination costs that kept the firm as the primary production unit. The difficulty of contracting across firm boundaries — communicating specifications, verifying quality, enforcing delivery — remained high. The gains from electrification were therefore captured primarily within existing firm structures, which expanded in scale but did not fundamentally reconstitute their organizational logic. The vertically integrated industrial corporation, dominant through much of the twentieth century, was in part a response to high coordination costs that technology had not yet addressed.

The internet wave differed in a crucial respect. It compressed the cost of distributing information and reduced, though did not eliminate, information asymmetries between transacting parties. This made certain categories of market coordination more efficient: price discovery, supplier comparison, buyer verification, and the matching of supply with demand across geographies. E-commerce, platform intermediation, and the proliferation of networked services all reflected this compression of distribution and information cost. Firm boundaries did shift at the margin: outsourcing accelerated, global supply chains deepened, and platform business models emerged that organized production through markets rather than hierarchies. But the core economic logic of the firm remained intact. Coordination of complex, high-value, knowledge-intensive work still required internalization, because the transaction costs of contracting for such work across firm boundaries remained high. The internet lowered the cost of transacting through the market (TC_market, formalized in the transaction-cost discussion below) for standardized, verifiable outputs; it did not resolve the contracting problem for complex cognitive tasks.

The Present Moment: Three Structural Distinctions

The current inflection differs from these prior waves in three specific and analytically important respects.

First, the scope of substitution has extended to high-skill cognitive tasks. Mechanization substituted for physical labor. Early computing substituted for clerical and computational routine. The internet substituted for information intermediation. AI, in its current generative and agentic forms, substitutes for — or substantially augments — tasks that previously required advanced judgment, synthesis, drafting, and structured analysis. These are the tasks that formed the productive core of the knowledge economy’s most valuable sectors: professional services, financial analysis, legal research, software engineering, scientific inquiry, strategic advisory. Prior technology waves left these tasks largely untouched, which is why the knowledge economy continued to command premium labor returns even through the internet era. The present wave does not.

Second, the compression of intelligence cost and coordination cost is occurring concurrently, not sequentially. Prior waves repriced single input categories over extended periods. The current inflection is simultaneously repricing both the cost of generating cognitive output and the cost of coordinating exchange. This simultaneity means that the two primary barriers to the modularization of the firm — the high cost of external cognitive labor and the high transaction cost of coordinating it — are falling at the same time. The result is not a marginal shift in firm boundaries but a potential structural discontinuity in the economic logic of why the firm exists at the scale it does.

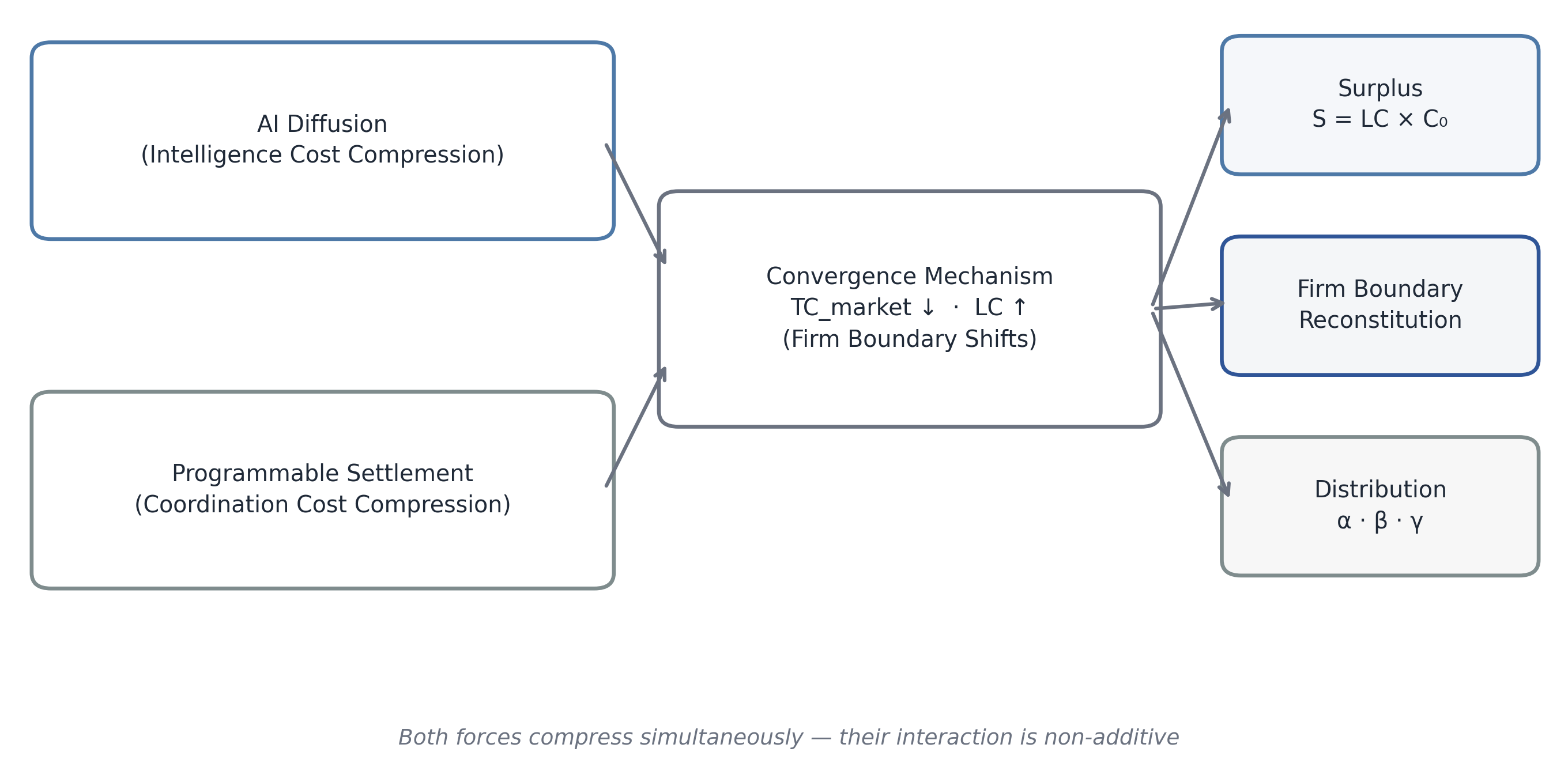

Third, diffusion is occurring in quarters rather than decades. Electrification took roughly four decades to diffuse through U.S. manufacturing. The internet took roughly fifteen years to produce significant organizational change in most industries. The current cycle, measured from the commercial availability of capable large language models in late 2022 to meaningful enterprise deployment, has proceeded in approximately eighteen months for early adopters and is tracking toward broad-sector integration within three to five years. The speed of diffusion compresses the adjustment period available to incumbents, labor markets, and regulatory institutions.

Transaction-Cost Theory and the Reconstitution of the Firm

The theoretical framework best suited to analyzing these structural implications is the transaction-cost economics of Coase and Williamson. In the Coasian framework, the boundary of the firm is determined by the comparison between the cost of organizing an additional transaction within the firm (TC_internal) and the cost of conducting that transaction through the market (TC_market). Firms internalize activities when TC_market > TC_internal, and externalize — or refrain from internalizing — when the inequality reverses.

Williamson extended this framework to account for asset specificity, bounded rationality, and opportunism as the primary drivers of transaction cost. Activities requiring highly specific assets, complex contracting, and difficult verification are internalized; standardized, verifiable, commoditizable activities are externalized to markets or networks. The post-war corporation’s vertical integration, the professional services firm’s employment of large junior labor pools, and the corporate function’s reluctance to outsource knowledge-intensive work all reflect high TC_market in their respective activity domains.

The convergence of AI and programmable settlement shifts this inequality through two coordinated channels. Programmable settlement (smart contracts, automated payment rails, and on-chain verification mechanisms) directly reduces TC_market by lowering search costs, shortening settlement latency, automating enforcement, and enabling conditional payments tied to verifiable outputs. AI simultaneously reduces the minimum cognitive capability required to perform complex tasks, effectively reducing the asset-specificity premium that previously made external contracting for knowledge work prohibitively costly. As AI tools normalize output quality across a wider population of practitioners, the contracting problem for knowledge work becomes more tractable: outputs become more verifiable, quality distributions narrow, and opportunism risk diminishes.

The combined effect is that the gap between TC_market and TC_internal narrows. Where the gap closes or reverses for a given activity, selective externalization (the modularization of previously internalized functions to external networks of AI-enabled operators) becomes economically rational. The firm does not disappear in this logic; it reconstitutes as an orchestration layer. Its strategic core (the activities that involve proprietary judgment, irreplaceable relationships, and genuine asset specificity) remains internal. Its standardizable outputs, previously justified as internal by high TC_market, migrate to organized external markets.
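The boundary rule can be sketched as a simple comparison applied activity by activity. The following Python is a minimal illustration under stated assumptions: the `Activity` type, the `externalized` helper, the proportional `market_cost_reduction` parameter, and all cost indices are hypothetical and are not drawn from the manuscript's data.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Activity:
    name: str
    tc_internal: float  # cost of organizing the activity inside the firm
    tc_market: float    # cost of contracting for it through the market


def externalized(activities: Iterable[Activity],
                 market_cost_reduction: float = 0.0) -> List[str]:
    """Names of activities whose TC_market, after a proportional
    reduction, falls strictly below TC_internal."""
    if not 0.0 <= market_cost_reduction <= 1.0:
        raise ValueError("market_cost_reduction must lie in [0, 1]")
    factor = 1.0 - market_cost_reduction
    return [a.name for a in activities if a.tc_market * factor < a.tc_internal]


# Hypothetical cost indices, chosen only to show the mechanism.
portfolio = [
    Activity("document drafting", tc_internal=100.0, tc_market=130.0),
    Activity("client relationship management", tc_internal=100.0, tc_market=400.0),
]

print(externalized(portfolio))                             # []
print(externalized(portfolio, market_cost_reduction=0.4))  # ['document drafting']
```

In this toy portfolio a 40 percent fall in market transaction cost moves the standardizable activity across the boundary, while the relationship-intensive activity stays internal, which mirrors the orchestration-layer outcome described above.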

This does not mean all firms modularize simultaneously or to the same degree. Regulatory constraints, trust costs, reputational capital, and organizational inertia will produce substantial heterogeneity in the pace and extent of reconstitution. Firms in highly regulated industries, those whose outputs are difficult to verify externally, and those with deep institutional relationships built on employed labor will modularize more slowly. But the direction of the equilibrium force is clear: as TC_market falls toward a low bound driven by programmable settlement and AI-enabled verification, the optimal organizational form shifts away from the large integrated employment structure and toward the curated production network.

The Counter-Hypothesis and Its Limitations

Analytical credibility requires acknowledging the strongest version of the counter-argument. If AI generates sufficient demand for new categories of labor — roles that do not exist today, tasks that emerge from the productivity gains themselves, or complementary human skills that AI diffusion elevates in value — then the net effect on employment may be positive, the redistribution thesis may be overstated, and the firm reconstitution dynamic may be more incremental than structural. This is not an empty argument; prior general-purpose technology waves did generate new employment categories that partially or fully absorbed displaced workers over multi-decade horizons.

The historical analogy, however, has important limits in the present context. Prior waves primarily automated routine, codifiable tasks, leaving high-skill cognitive work as the destination sector for displaced workers. The current wave is compressing the destination sector itself. The adjustment mechanism that operated in prior transitions — upskilling into cognitive labor — is less available when cognitive labor is itself the object of compression. New roles will emerge; the question is whether they will emerge at sufficient scale, in accessible geographies, and at sufficiently compressed wage premia to prevent significant distributional stress in the interim. This manuscript treats the counter-hypothesis as a meaningful qualifier on the pace and intensity of structural change, but not as a refutation of its direction.

The productivity claims associated with AI adoption vary widely across enterprises, sectors, and methodologies. Pilot-study results, vendor benchmarks, and academic experiments occupy different positions in the distribution of reported outcomes, and credible institutional analysis requires a framework that distinguishes structural signal from implementation noise. This section develops the microeconomic foundations of intelligence compression — the process by which AI integration reduces the effective labor input required for a given bundle of cognitive tasks — and situates empirical observations within that framework.

The Compression Model

Consider an enterprise or practice unit whose output is defined by a task bundle T. This bundle consists of the set of cognitive activities required to produce the unit’s deliverable — analysis, drafting, synthesis, classification, structured reasoning, and the associated coordination and quality-review activities. In the pre-AI baseline, the labor input required to complete T is L₀, measured in full-time equivalent hours or equivalent cost units. Following AI integration, the effective labor input falls to L₁. The raw compression ratio is:

Δ = (L₀ − L₁) / L₀

This is the fraction by which labor input has been reduced holding output quality constant. Δ captures the theoretical productivity gain available from AI tooling for this task bundle, under conditions of full adoption and frictionless implementation.

In practice, Δ is not the directly deployable measure of productive surplus, for two reasons. First, not all of the compressed labor input translates directly into usable output. Productivity gains may be partially consumed by quality review cycles, model prompting overhead, error correction, and the organizational learning costs of integrating AI into existing workflows. This modulation is captured by the parameter φ (phi), the usable-output factor, defined as the fraction of raw AI-generated output that meets delivery standards without additional substantial labor investment. φ ranges from 0 to 1, with higher values indicating more mature and well-integrated AI deployment.

Second, the conversion of raw labor compression into headcount or cost reduction is not mechanical. Enterprises face adjustment costs, contractual obligations, regulatory constraints, and the legitimate need to retain surge capacity and institutional knowledge. The fraction of gross compression that materializes as realized cost reduction or redeployable capacity is captured by ε (epsilon), the headcount elasticity — the degree to which labor input can actually be reduced or redeployed in response to the compression available.

The effective compression rate, representing the portion of labor cost that can be deployed as productive surplus, is therefore:

LC = ε × (Δ × φ)

And the annual surplus generated at the enterprise level, as introduced in Section 1, is:

S = LC × C₀

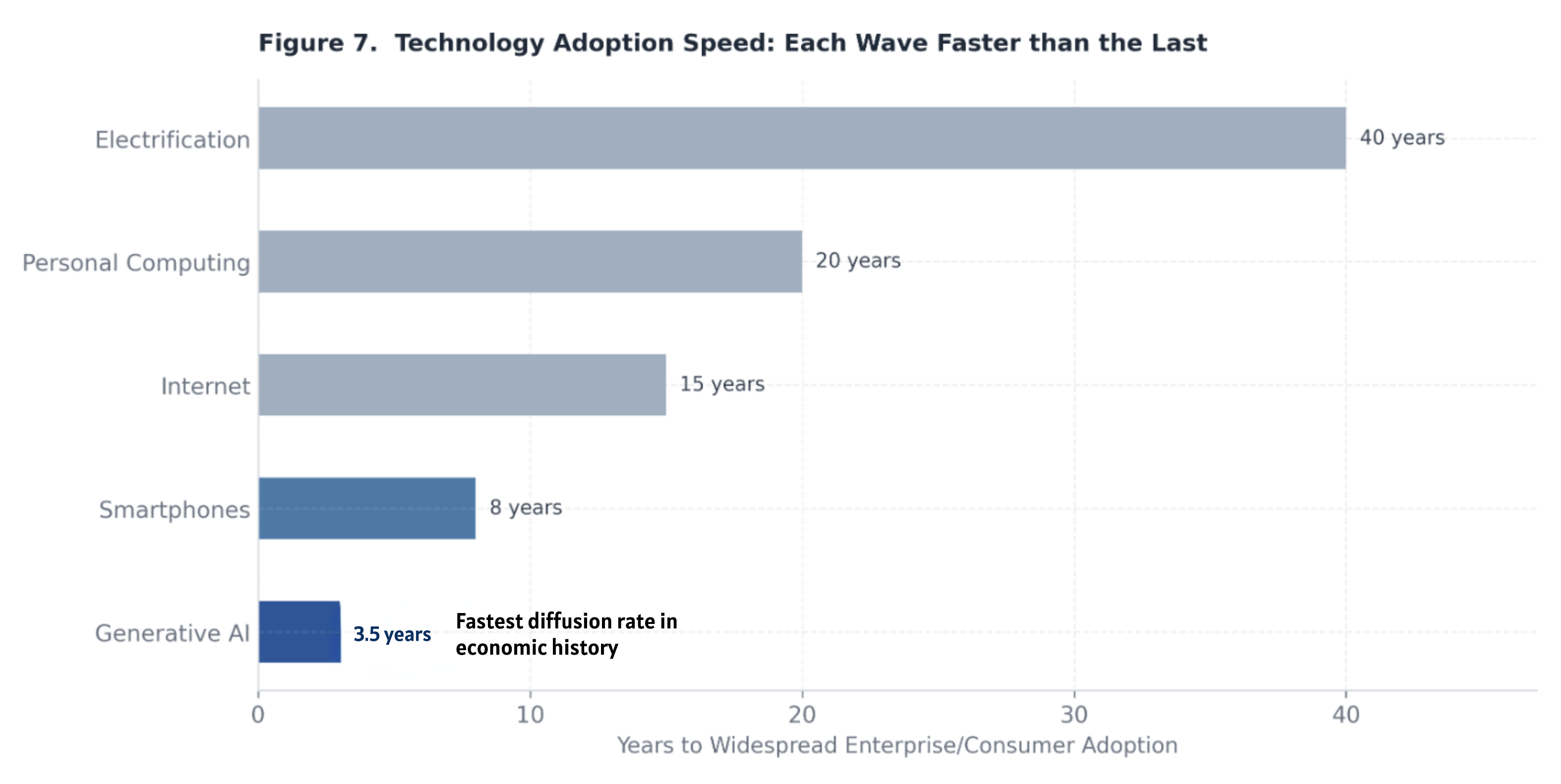

Empirical Calibration of Parameters

Enterprise pilot observations across early adopters in professional services, financial analysis, software engineering, and structured knowledge work provide an initial empirical calibration of these parameters, acknowledging that this evidence base is early-stage and will expand and refine over the medium term.

Raw compression ratios Δ in well-designed pilots are typically observed in the range of 0.25 to 0.35 — meaning that AI tooling reduces effective labor input for the targeted task bundle by 25 to 35 percent under close-to-optimal conditions. This range is consistent across multiple independent assessments of coding assistance, contract review, financial document analysis, and research synthesis, suggesting that a central estimate of approximately 30 percent is a reasonable baseline for Δ in high-applicability task bundles.

The usable-output factor φ is more variable and more dependent on implementation quality. In mature deployments with well-developed prompting architectures, output quality calibration, and integration into existing review workflows, φ is observed in the range of 0.6 to 0.7. In less mature deployments — where model outputs require substantial review and correction, or where integration friction is high — φ may fall to 0.5 or below. A central estimate of approximately 0.6 to 0.65 reflects the current state of enterprise deployment capability.

The headcount elasticity ε reflects organizational and contractual realities and varies considerably by sector. In flexible-staffing environments — consulting project teams, legal matters staffed on a case basis, software engineering squads — ε may approach 0.6 to 0.7 as headcount decisions are revisited on a project-by-project basis. In environments with high fixed employment costs, regulatory constraints on workforce adjustment, or strong organizational norms against rapid headcount changes, ε may fall to 0.4 to 0.5.

Substituting central estimates: LC = 0.60 × (0.30 × 0.65) ≈ 0.117, or roughly 12 percent effective compression in a typical early-deployment scenario. Under more favorable conditions, with φ approaching 0.70 and ε at 0.65, LC reaches approximately 0.16 at the top of the observed pilot range (Δ = 0.35) and approximately 0.20 only where well-matched task bundles push Δ to roughly 0.44, above that range. This range of 12 to 20 percent in early deployments is the empirically grounded anchor for near-term surplus modeling, with the expectation that Δ will rise as AI capability improves, φ will rise as organizational integration matures, and ε will rise as labor market adjustment mechanisms adapt.
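The calibration arithmetic can be reproduced with a few lines of Python. This is a sketch: the function names are assumptions introduced for illustration, while the parameter values are taken from the ranges reported in this section.

```python
def raw_compression(l0: float, l1: float) -> float:
    """Delta = (L0 - L1) / L0, the raw labor compression ratio."""
    if l0 <= 0:
        raise ValueError("baseline labor input L0 must be positive")
    if not 0 <= l1 <= l0:
        raise ValueError("post-AI labor input L1 must lie in [0, L0]")
    return (l0 - l1) / l0


def effective_compression(delta: float, phi: float, epsilon: float) -> float:
    """LC = epsilon * (delta * phi)."""
    for name, value in (("delta", delta), ("phi", phi), ("epsilon", epsilon)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must lie in [0, 1]")
    return epsilon * (delta * phi)


def required_delta(target_lc: float, phi: float, epsilon: float) -> float:
    """Raw compression needed to reach a target LC at given phi and epsilon."""
    if phi <= 0 or epsilon <= 0:
        raise ValueError("phi and epsilon must be positive")
    return target_lc / (phi * epsilon)


central = effective_compression(delta=0.30, phi=0.65, epsilon=0.60)
top_of_pilot_range = effective_compression(delta=0.35, phi=0.70, epsilon=0.65)
delta_for_20_percent = required_delta(target_lc=0.20, phi=0.70, epsilon=0.65)

print(f"central LC: {central:.3f}")
print(f"LC at delta = 0.35: {top_of_pilot_range:.3f}")
print(f"delta needed for LC = 0.20: {delta_for_20_percent:.3f}")
```

Keeping Δ, φ, and ε as separate arguments makes it easy to see which parameter is doing the work in any given scenario, since LC is simply their product.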

What Intelligence Compression Reduces

The most structurally significant effect of intelligence compression is its impact on the leverage pyramid — the organizational model in which senior practitioners’ time and judgment are amplified through layers of subordinate labor performing structured analysis, document preparation, and preliminary synthesis. This model has been the productive core of professional services, investment banking, management consulting, and legal advisory for decades. Its economic logic depends on the assumption that junior labor is cheap relative to senior labor and that the ratio of junior-to-senior output is high enough to justify the pyramid structure.

AI compression attacks this assumption directly. When AI tooling can perform 30 percent or more of the structured analysis, drafting, and synthesis that occupies junior labor, the optimal pyramid ratio falls. The senior practitioner remains essential; the volume of junior labor required to support each senior practitioner does not. The immediate practical consequence is that revenue per senior FTE rises as the same deliverable requires less subordinate input. The strategic consequence is that firms organized primarily around the leverage pyramid face a structural challenge to their core economic mechanism, not a temporary headcount adjustment.

Intelligence compression also accelerates output. The same team, with AI tooling well integrated, produces more deliverables per unit of time. This output acceleration initially manifests as margin expansion for early adopters — more revenue per dollar of labor cost. The medium-term dynamic, however, is competitive price compression: as AI-native entrants calibrate their pricing to the lower cost structure that AI tooling enables, and as incumbent adoption catches up, the productivity surplus is competed away in the form of lower prices to clients rather than retained as profit. The firm that captures enduring surplus from intelligence compression is not the one that adopts AI earliest, but the one that uses the early adoption window to build organizational capabilities, client relationships, and service architectures that are not easily replicated.

Reinterpreting the Production Function

The standard treatment of productivity-enhancing technology in macroeconomic models is to increase total factor productivity A in a Cobb-Douglas or similar production function, leaving the factor substitutability between labor (L) and capital (K) unchanged. This treatment is inadequate for the present case, because AI does not merely make labor and capital jointly more productive; it alters the substitutability between them.

A more appropriate formulation recognizes that AI capital (K_AI) can substitute for a portion of human labor, producing an effective labor input:

L̃ = L(1 − Δ) + θ · K_AI

Here, L(1 − Δ) is the residual human labor input after AI compression, and θ represents the effectiveness with which AI capital substitutes for human labor in the task bundle — effectively the productivity of AI capital relative to the human labor it displaces. K_AI is the stock of AI capital deployed, encompassing model access, fine-tuned systems, agent infrastructure, and the organizational capital required to deploy these tools effectively.

The critical macroeconomic parameter in this formulation is the elasticity of substitution between human labor L and AI capital K_AI. If this elasticity exceeds unity — if AI capital and human labor are gross substitutes — then as AI capital becomes cheaper, labor’s share in production declines structurally, not merely cyclically. If the elasticity is below unity — if AI capital and human labor are gross complements — then falling AI costs raise the marginal product of human labor, and labor’s share may be maintained or increased even as the capital-to-labor ratio rises. The weight of current empirical evidence, though preliminary, leans toward substitutability exceeding complementarity for the task bundles most directly in AI’s current capability range — structured cognitive work, analysis, and synthesis. For tasks requiring embodied judgment, complex interpersonal interaction, and high-stakes irreversible decision-making, complementarity may dominate. The practical implication is that labor market impacts will be highly differentiated by task type, with substitution effects concentrated in the structured cognitive tier and complementarity effects concentrated at the highest level of judgment-intensive work.
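The role of the substitution elasticity can be illustrated numerically. The sketch below pairs the effective-labor expression given above with a textbook CES aggregator; the CES form is a swapped-in standard specification used here for illustration, not the manuscript's own production function, and the distribution weight `a`, the function names, and the input values are all assumptions.

```python
def effective_labor(labor: float, delta: float, theta: float, k_ai: float) -> float:
    """Effective labor input: L(1 - delta) + theta * K_AI."""
    return labor * (1.0 - delta) + theta * k_ai


def ces_labor_share(labor: float, ai_capital: float,
                    sigma: float, a: float = 0.5) -> float:
    """Labor's income share under competitive factor pricing for
    Y = [a*K^rho + (1-a)*L^rho]^(1/rho), with rho = (sigma - 1) / sigma.
    sigma is the elasticity of substitution between L and K_AI."""
    if labor <= 0 or ai_capital <= 0:
        raise ValueError("labor and ai_capital must be positive")
    if sigma <= 0:
        raise ValueError("sigma must be positive")
    if not 0.0 < a < 1.0:
        raise ValueError("a must lie strictly between 0 and 1")
    if sigma == 1.0:
        return 1.0 - a  # Cobb-Douglas limit: constant factor shares
    rho = (sigma - 1.0) / sigma
    capital_term = a * ai_capital ** rho
    labor_term = (1.0 - a) * labor ** rho
    return labor_term / (capital_term + labor_term)


# Hypothetical experiment: hold labor fixed and quadruple AI capital.
for sigma in (0.5, 1.0, 2.0):
    before = ces_labor_share(labor=1.0, ai_capital=1.0, sigma=sigma)
    after = ces_labor_share(labor=1.0, ai_capital=4.0, sigma=sigma)
    print(f"sigma={sigma}: labor share {before:.3f} -> {after:.3f}")
```

With these placeholder inputs, labor's share falls from 0.50 to about 0.33 when σ = 2 and rises from 0.50 to 0.80 when σ = 0.5, which is the substitutes-versus-complements contrast described in the text.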

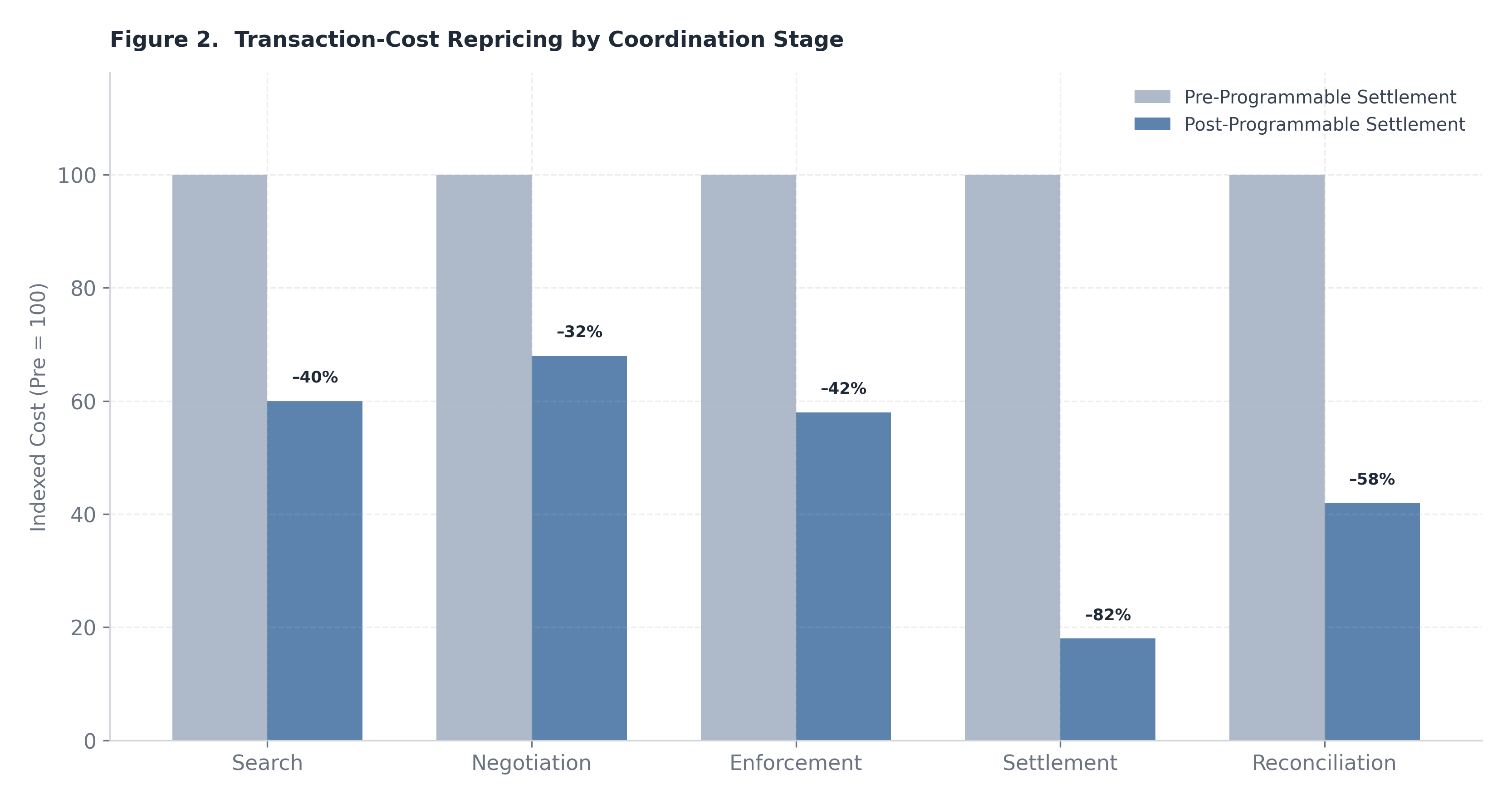

Figure 2. Indexed transaction-cost compression by stage, with pre-programmable settlement baseline normalized to 100.

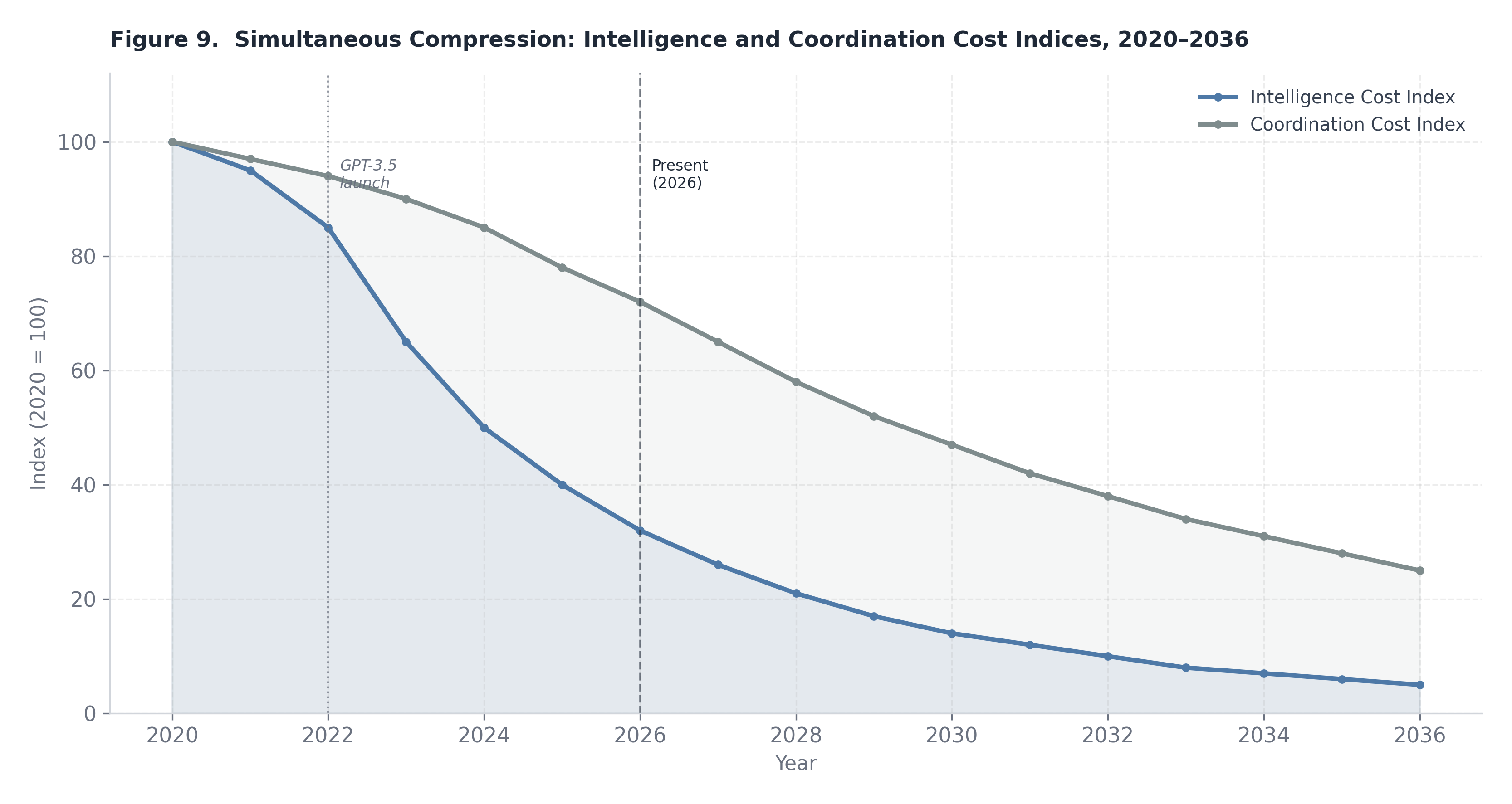

Figure 9. Both intelligence cost (AI-driven) and coordination cost (programmable settlement-driven) indices decline from a 2020 baseline of 100. Their simultaneous compression is the defining structural dynamic of the Convergence Economy.

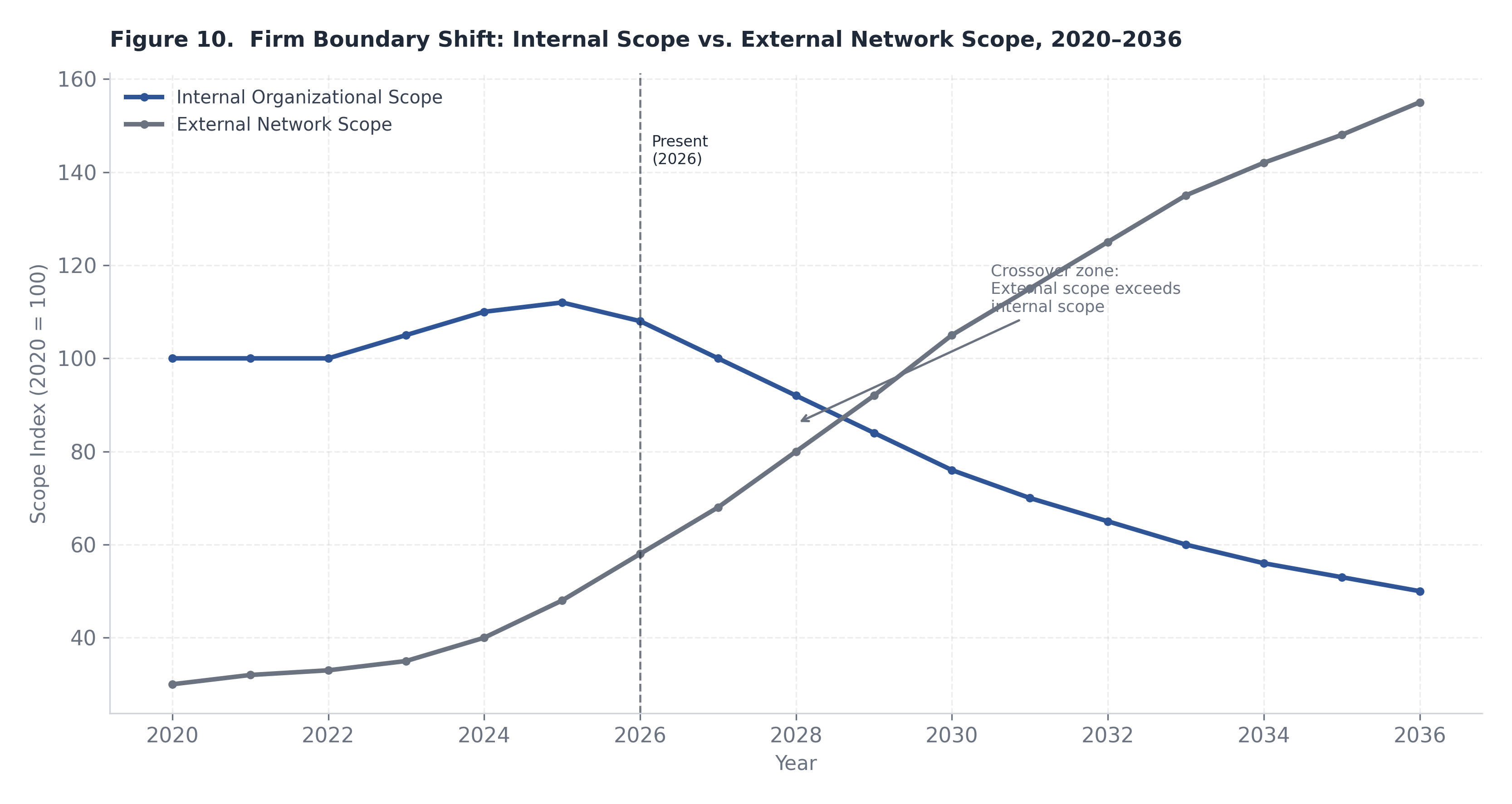

Figure 10. As dual compression proceeds, internal organizational scope peaks and declines while external network scope expands. The crossover marks the transition from hierarchical to orchestration-based firm architecture.

Transaction costs are not a monolithic category. They are composed of distinct cost elements that arise at different stages of the coordination process, and each element is affected differently by the infrastructure changes now underway. A rigorous treatment of coordination compression requires disaggregating transaction costs into their constituent components, analyzing the magnitude of reduction achievable in each, and then assessing the aggregate effect on the firm boundary and the enterprise architecture.

The canonical taxonomy of transaction costs encompasses five stages: search (the cost of identifying suitable counterparties and assessing their capabilities and reliability); negotiation (the cost of reaching agreement on terms, conditions, and contingencies); enforcement (the cost of ensuring that contracted obligations are fulfilled); settlement (the cost of completing the financial transfer and achieving finality); and reconciliation (the cost of verifying, recording, and matching transaction records across the parties involved). In the pre-programmable-settlement environment, all five stages carry substantial friction, particularly for transactions that cross institutional, jurisdictional, or temporal boundaries.

Settlement Latency and Counterparty Exposure

The most directly quantifiable reduction from programmable settlement is in settlement latency. Conventional cross-institutional financial settlement — whether for contractor payments, intercompany transfers, supply chain disbursements, or royalty flows — operates on cycles ranging from one to five business days in most major markets, with cross-border transactions potentially extending further. This latency creates a counterparty exposure window during which one or both parties bear risk of default, operational failure, or dispute without recourse to finality. For large transactions, this exposure is typically managed through credit facilities, collateral requirements, and correspondent banking relationships — all of which carry costs that are embedded in the effective transaction price.

Programmable settlement on blockchain or distributed ledger infrastructure collapses this latency to near-zero for a growing class of transactions. The settlement of stablecoin transfers, tokenized payment obligations, and smart-contract-triggered disbursements can achieve finality in seconds rather than days. The practical consequence is not merely speed; it is the elimination of the counterparty exposure window and the associated credit risk management overhead. For enterprises that process large volumes of relatively standardized payments — contractor disbursements, milestone-based professional service payments, revenue shares — the reduction in working capital lockup, credit facility utilization, and administrative management costs is meaningful and recurring.

Conditional Execution and Milestone-Based Contracting

Beyond settlement speed, programmable settlement enables conditional execution — the automatic release of payment contingent on the verification of specified conditions, without the need for human intervention at the trigger point. This is particularly consequential for the coordination of distributed micro-enterprise networks, where the cost of manually verifying, approving, and processing a large volume of small payments represents a significant administrative overhead relative to payment size. In a traditional contracting model, this overhead creates an effective minimum transaction size below which outsourcing is administratively unviable. Programmable settlement removes this minimum, enabling the economic viability of micro-payments — payments for discrete, verifiable outputs of small unit value — at scale.

The architectural implication is that production networks can be structured around granular milestone verification rather than bulk periodic settlement. A research task completed, a document reviewed, a module tested, a translation verified — each discrete output can trigger an immediate conditional payment without requiring human approval at the individual transaction level. This shifts the locus of coordination cost from transaction processing to the design of verification conditions, which is a one-time fixed cost rather than a per-transaction variable cost. The amortization of this fixed cost across large volumes of transactions is what makes programmable settlement economically transformative for distributed production architectures.
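The milestone logic described above can be sketched as a small in-memory model. This is an assumption-laden illustration rather than a smart-contract implementation: the `Milestone` and `Escrow` classes and the `all_tests_pass` condition are hypothetical names, and a production system would run on an actual settlement rail with its own finality and security guarantees.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Milestone:
    name: str
    amount: float
    verify: Callable[[dict], bool]  # verification condition, designed once and reused
    paid: bool = False


@dataclass
class Escrow:
    balance: float
    ledger: list = field(default_factory=list)

    def submit(self, milestone: Milestone, evidence: dict) -> bool:
        """Release payment automatically when the verification condition holds."""
        if milestone.paid or not milestone.verify(evidence):
            return False
        if milestone.amount > self.balance:
            raise ValueError("insufficient escrow balance")
        self.balance -= milestone.amount
        milestone.paid = True
        self.ledger.append((milestone.name, milestone.amount))
        return True


def all_tests_pass(evidence: dict) -> bool:
    """Hypothetical condition: every test in the submitted report passed."""
    total = evidence.get("tests_total", 0)
    return total > 0 and evidence.get("tests_passed", 0) == total


escrow = Escrow(balance=500.0)
module = Milestone("module tested", 120.0, all_tests_pass)
print(escrow.submit(module, {"tests_passed": 41, "tests_total": 42}))  # condition not met
print(escrow.submit(module, {"tests_passed": 42, "tests_total": 42}))  # payment released
```

The design choice mirrors the argument in the text: the per-transaction path contains no human approval step, and the only bespoke work is writing the `verify` condition, which is a fixed cost shared by every subsequent submission.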

Treasury and Working Capital Architecture

At the enterprise treasury level, programmable settlement creates both opportunities and new requirements. The reduction in float — the time during which payment is in transit and neither party has full access to the funds — reduces the working capital required to sustain ongoing operations at a given revenue level. Enterprises that currently maintain substantial cash buffers to manage payment timing mismatches can redeploy a portion of this capital to productive uses. In aggregate, across a large enterprise with complex payment flows, the reduction in working capital lockup from near-zero settlement latency can be material relative to the cost of capital.
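A back-of-the-envelope sketch of the float argument follows. It assumes, as a simplification not stated in the text, that capital tied up in transit is approximately daily payment volume multiplied by settlement lag in days, and that its annual cost is that amount multiplied by the cost of capital. All figures are hypothetical.

```python
def float_capital(daily_volume: float, settlement_days: float) -> float:
    """Capital in transit at any moment (simplifying assumption: volume x lag)."""
    return daily_volume * settlement_days


def annual_carrying_cost(daily_volume: float, settlement_days: float,
                         cost_of_capital: float) -> float:
    """Annual cost of financing the float at a given cost of capital."""
    return float_capital(daily_volume, settlement_days) * cost_of_capital


# Hypothetical enterprise: $2M of payments per day, 8% cost of capital.
t_plus_2 = annual_carrying_cost(2_000_000, 2.0, 0.08)
near_real_time = annual_carrying_cost(2_000_000, 0.0, 0.08)
print(f"T+2 carrying cost: ${t_plus_2:,.0f}; near-real-time: ${near_real_time:,.0f}")
```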

However, real-time settlement also creates new requirements for liquidity management precision. In a T+2 or T+3 settlement environment, treasury functions manage liquidity at a daily or weekly level with substantial buffer capacity. In a real-time settlement environment, liquidity must be available at the point of transaction with greater precision, requiring more sophisticated cash positioning, automated liquidity sweeps, and real-time visibility into payment obligations and incoming receipts. This represents a net increase in treasury complexity even as it reduces working capital cost — a transition that requires investment in treasury technology infrastructure before the cost benefits are fully realized.

The emergence of multi-rail settlement environments — in which enterprises operate across conventional banking rails, domestic faster-payment systems, and blockchain-based stablecoin rails simultaneously — introduces redundancy requirements and reconciliation complexity. On-chain reconciliation tools that can match transaction records across rails in real time are becoming a necessary component of enterprise treasury infrastructure, representing both a cost and a capability investment.
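To make the reconciliation problem concrete, the sketch below matches internal payment obligations against records arriving from several rails. It is a simplified illustration: the record fields (`ref`, `amount_cents`, `rail`) are hypothetical, and real reconciliation would also have to handle fees, partial payments, currency conversion, and timing tolerances.

```python
from collections import defaultdict


def reconcile(obligations: list[dict], rail_records: list[dict]) -> dict:
    """Match obligations to rail records on (reference, amount in cents)."""
    by_key = defaultdict(list)
    for record in rail_records:
        by_key[(record["ref"], record["amount_cents"])].append(record)

    matched, unmatched = [], []
    for obligation in obligations:
        key = (obligation["ref"], obligation["amount_cents"])
        if by_key[key]:
            matched.append((obligation, by_key[key].pop(0)))
        else:
            unmatched.append(obligation)

    # Rail records that correspond to no known obligation.
    unexpected = [rec for recs in by_key.values() for rec in recs]
    return {"matched": matched, "unmatched": unmatched, "unexpected": unexpected}


obligations = [
    {"ref": "INV-1", "amount_cents": 10_000},
    {"ref": "INV-2", "amount_cents": 2_500},
]
rail_records = [
    {"ref": "INV-1", "amount_cents": 10_000, "rail": "stablecoin"},
    {"ref": "INV-9", "amount_cents": 700, "rail": "bank"},
]
result = reconcile(obligations, rail_records)
print(len(result["matched"]), len(result["unmatched"]), len(result["unexpected"]))
```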

Reconsidering the Firm Boundary

The combination of AI-enabled output normalization and programmable settlement cost reduction requires a systematic revision of the make-versus-buy analysis that underlies firm boundary decisions. The classical Coasian condition for internalization is:

Internalize when TC_market > TC_internal

As noted in Section 2, AI reduces TC_internal for many knowledge-intensive activities by lowering the effective cost of complex task completion. Simultaneously, programmable settlement reduces TC_market — the cost of contracting for external production — by lowering search, negotiation, enforcement, settlement, and reconciliation overhead.

The critical insight is that these two forces affect the two sides of the Coasian inequality asymmetrically across activity types. For the strategic core of an enterprise — activities involving proprietary judgment, irreplaceable institutional relationships, regulatory accountability, and genuine asset specificity — TC_internal falls modestly (AI accelerates delivery but does not alter the fundamental rationale for internalization), while TC_market may remain high (external contracting is infeasible or undesirable regardless of settlement costs). These activities remain internal.

For standardized, verifiable, output-definable activities that were previously internalized primarily because TC_market was prohibitively high (structured analysis, document production, code review, compliance monitoring, data processing), both sides of the inequality fall: AI reduces TC_internal by lowering internal production cost, and programmable settlement reduces TC_market by lowering external coordination cost. In this category, the optimal boundary becomes genuinely ambiguous and increasingly shifts toward selective externalization to AI-enabled micro-operators or specialized micro-enterprises.

This can be expressed formally as a condition on the optimal firm size S*, which is now a function of three variables: the AI substitution capacity across the activity bundle (which reduces required internal headcount), the transaction cost compression factor (which reduces TC_market for externalized activities), and the residual trust cost τ. Trust cost — the risk premium associated with contracting sensitive work to external parties where reputational, IP, or relationship risks are non-trivial — may remain substantial even as mechanical transaction costs approach zero. The observation that TC_market approaches a near-zero bound in some activity categories does not imply that optimal firm size converges to an extreme; trust costs, regulatory accountability, and the ongoing value of organizational culture and institutional memory act as meaningful countervailing forces.
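One way to sketch the role of trust cost is to add τ to the market side of the Coasian condition. This additive form is an assumption made here for illustration; the text does not specify how τ enters the optimal-size condition. The function name and all cost values are hypothetical.

```python
def should_internalize(tc_internal: float, tc_market: float,
                       trust_cost: float = 0.0) -> bool:
    """Internalize when TC_market + tau > TC_internal (additive tau is an assumption)."""
    return tc_market + trust_cost > tc_internal


# Hypothetical activities with costs in arbitrary units.
activities = {
    "strategic core":        dict(tc_internal=80.0, tc_market=90.0, trust_cost=40.0),
    "structured analysis":   dict(tc_internal=60.0, tc_market=20.0, trust_cost=10.0),
    "sensitive client work": dict(tc_internal=60.0, tc_market=5.0,  trust_cost=70.0),
}
for name, costs in activities.items():
    decision = "internalize" if should_internalize(**costs) else "externalize"
    print(f"{name}: {decision}")
```

The third case illustrates the point made above: even when mechanical TC_market is close to zero, a sufficiently large trust cost keeps the activity inside the firm.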

International and Cross-Border Implications

The reduction in cross-border coordination costs has implications that extend beyond the domestic firm boundary into the geography of labor markets and global production networks. Stablecoin-based settlement enables real-time payment to contractors in any jurisdiction with network access, at near-zero transaction cost and without the currency conversion friction and correspondent banking overhead that have historically made micro-transactions across borders economically unviable. This fundamentally changes the feasibility calculus for global micro-enterprise networks.

The geographic wage arbitrage dynamic — the cost advantage of engaging high-skill labor in lower-wage markets — has existed since the early internet era but has been constrained by coordination friction, payment overhead, quality variability, and the minimum viable engagement size imposed by transaction costs. Programmable settlement reduces these constraints substantially, enabling the economic viability of smaller-scale, shorter-duration, cross-border engagements. The implication is that geographic wage arbitrage will accelerate as a production strategy in sectors where AI-normalized output quality removes the quality-verification barrier to cross-border engagement.

There is, however, a partial countervailing dynamic. AI tooling raises the productivity of practitioners in lower-wage markets, potentially enabling them to compete on output quality as well as price. As AI-enabled practitioners in emerging markets achieve output quality approaching that of high-cost-market incumbents, the wage differential sustaining arbitrage narrows — not because wages in high-cost markets fall, but because the productivity differential that justified paying them erodes. In the longer run, AI tooling may contribute to a productivity equalization that partially offsets the acceleration of geographic wage arbitrage, though the transition period is likely to be characterized by significant wage pressure in mid-tier knowledge work roles in high-cost markets before this equilibrium is approached.

The aggregate effect on the global distribution of knowledge-work income is therefore ambiguous at the margin, though for high-cost-market junior-tier knowledge workers its direction is negative. The combination of programmable settlement removing coordination barriers and AI tooling removing quality-verification barriers to global micro-enterprise engagement represents a structural shift in the competitive environment for structured cognitive labor, one that will reward practitioners who build judgment-intensive capabilities while placing sustained pressure on those whose value proposition rests primarily on task execution.

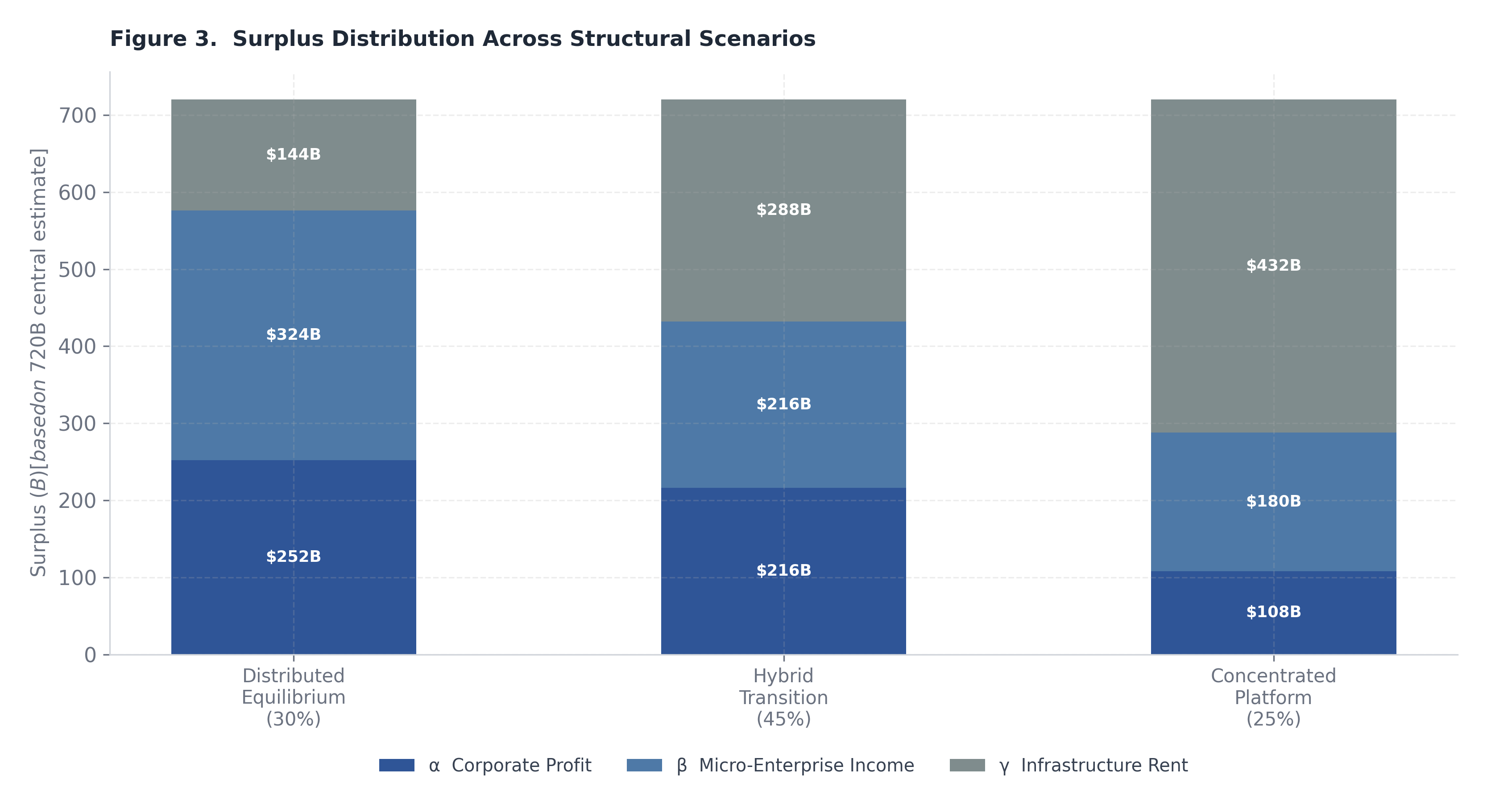

Figure 3. Dollar-value allocations of annual U.S. knowledge-economy surplus across corporate profit (α), micro-enterprise income (β), and infrastructure rent (γ) under three scenarios (based on ~$720B central estimate).

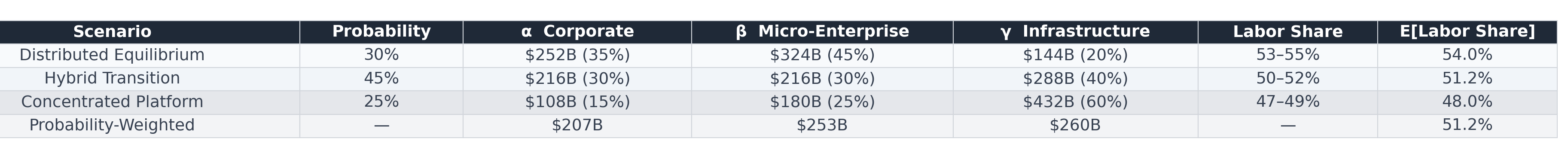

Table 2. Comparison of the three structural scenarios with probability weights, distribution coefficients (α/β/γ), dollar amounts, labor-share ranges, and probability-weighted expected outcomes.

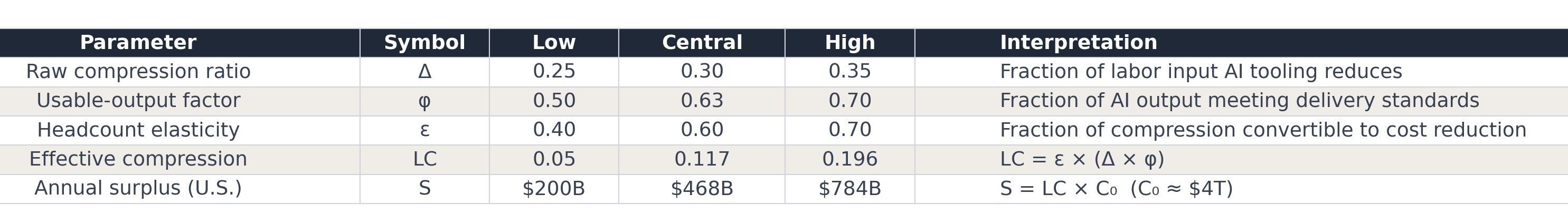

Any serious attempt to assess the macroeconomic consequences of AI-mediated labor compression must begin with a tractable model of surplus formation — that is, the economic value created when a unit of cognitive labor is displaced or augmented at below-replacement cost. The aggregate surplus generated across the knowledge economy can be expressed through a compact identity:

S = LC × C₀

where S denotes the total annual surplus (measured in nominal dollars), C₀ represents the addressable knowledge-sector wage base, and LC is the labor compression coefficient — the fraction of that wage base that is effectively replaced or structurally augmented by AI-mediated processes over the relevant measurement horizon.

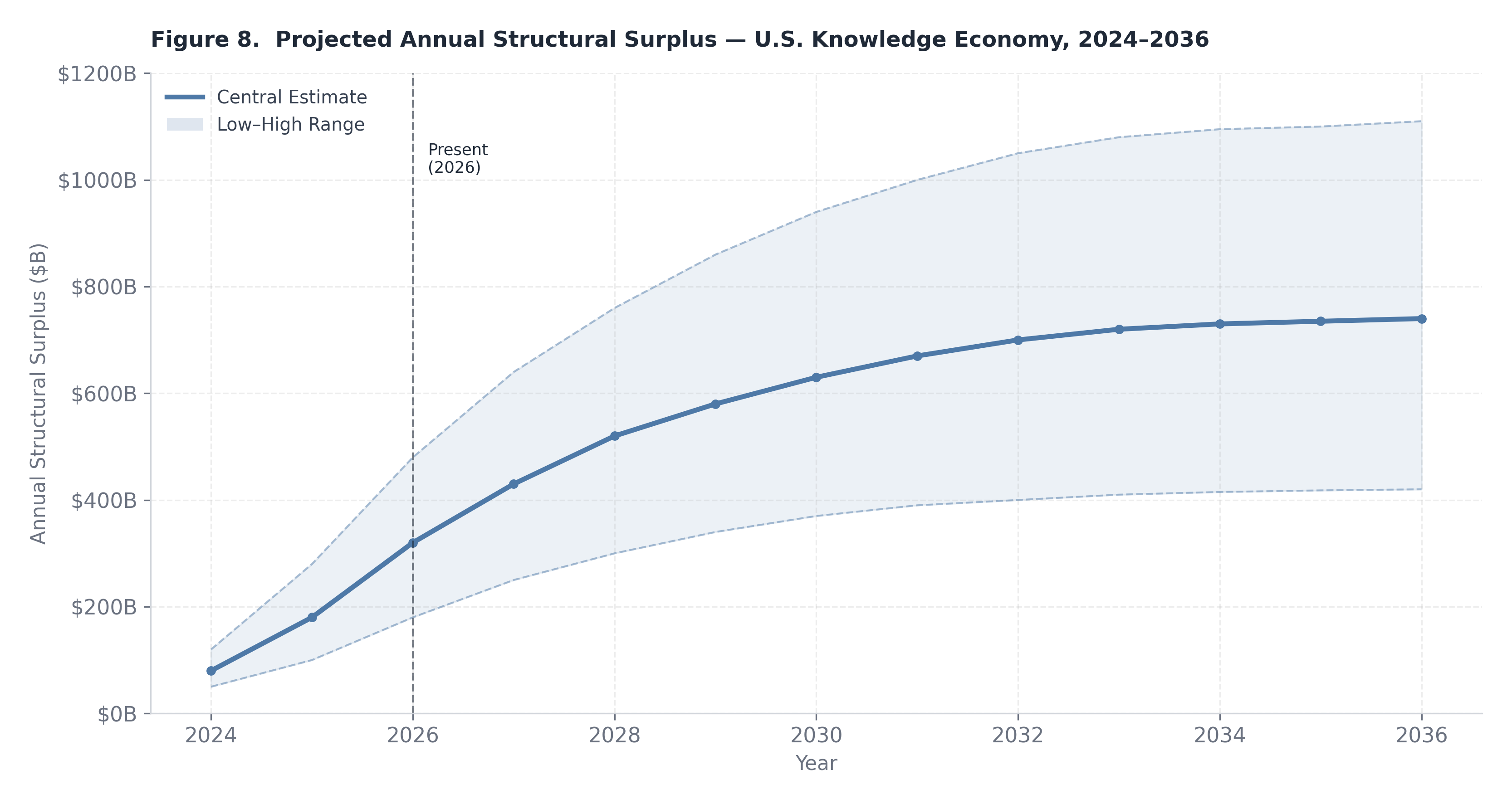

Calibrating against the U.S. knowledge economy, C₀ can be estimated at approximately $4 trillion annually, reflecting aggregate compensation across the broad professional, technical, managerial, and information-services workforce. This figure encompasses the wage mass of workers whose primary output is cognitive — analysis, synthesis, communication, decision support, documentation — rather than physical. Using a central-case labor compression coefficient of LC ≈ 18%, the aggregate surplus identity yields S ≈ $720 billion per annum. This is not a realized windfall waiting to be claimed; it is, rather, a structural displacement potential — an upper bound on the value at stake as firms, independent operators, and infrastructure platforms make competing claims on the efficiency gains embedded in generative AI deployment.

The compression coefficient LC is itself a derived quantity. It can be decomposed as a function of two underlying parameters:

LC = f(ε, Δ)

where ε is the task-level elasticity of AI substitution (the degree to which AI-generated output can functionally substitute for human-generated output within a given task category), and Δ is the adoption depth parameter (the fraction of addressable cognitive tasks within C₀ that are actually exposed to AI mediation within the horizon). The two parameters interact multiplicatively: high substitutability has limited surplus impact if adoption depth remains shallow, and vice versa.

Under a high-case parameterization — ε = 0.8 and Δ = 0.35 — the implied compression coefficient rises to approximately LC ≈ 28%, yielding S ≈ $1.1 trillion. This scenario reflects a world in which enterprise adoption accelerates beyond current rates, regulatory friction remains manageable, and frontier model capability continues to expand into previously hard-to-automate task categories. Under a low-case parameterization — ε = 0.5 and Δ = 0.20 — the coefficient falls to LC ≈ 10%, with S ≈ $400 billion. This scenario reflects slower institutional adoption, persistent quality gaps in AI output for high-stakes professional tasks, and organizational resistance to restructuring. The central case at $720 billion represents a considered midpoint, but the $700 billion range between the high and low cases underscores the profound sensitivity of distributional outcomes to parameters that remain genuinely uncertain.
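The arithmetic behind these cases can be checked with a short sketch. It assumes the simplest multiplicative form, LC = ε × Δ, which reproduces the high-case and low-case coefficients quoted above; the text itself only specifies LC = f(ε, Δ), so the exact functional form is an assumption. The central case is entered directly as LC = 0.18 because its underlying ε and Δ are not stated.

```python
C0 = 4.0e12  # addressable U.S. knowledge-sector wage base, in dollars (from the text)


def compression(eps: float, delta: float) -> float:
    """Labor compression coefficient under the assumed form LC = eps * delta."""
    return eps * delta


def surplus(lc: float, wage_base: float = C0) -> float:
    """Aggregate surplus identity: S = LC * C0."""
    return lc * wage_base


cases = {
    "low": compression(eps=0.5, delta=0.20),
    "central": 0.18,  # stated directly in the text
    "high": compression(eps=0.8, delta=0.35),
}
for name, lc in cases.items():
    print(f"{name}: LC = {lc:.0%}, S = ${surplus(lc) / 1e9:,.0f}B")
```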

Figure 8. Central estimate (teal) with low–high range band (shaded). The surplus is projected to exceed $700B annually by 2032 under the central scenario. Values are taken from the modelling in Section 5.1.

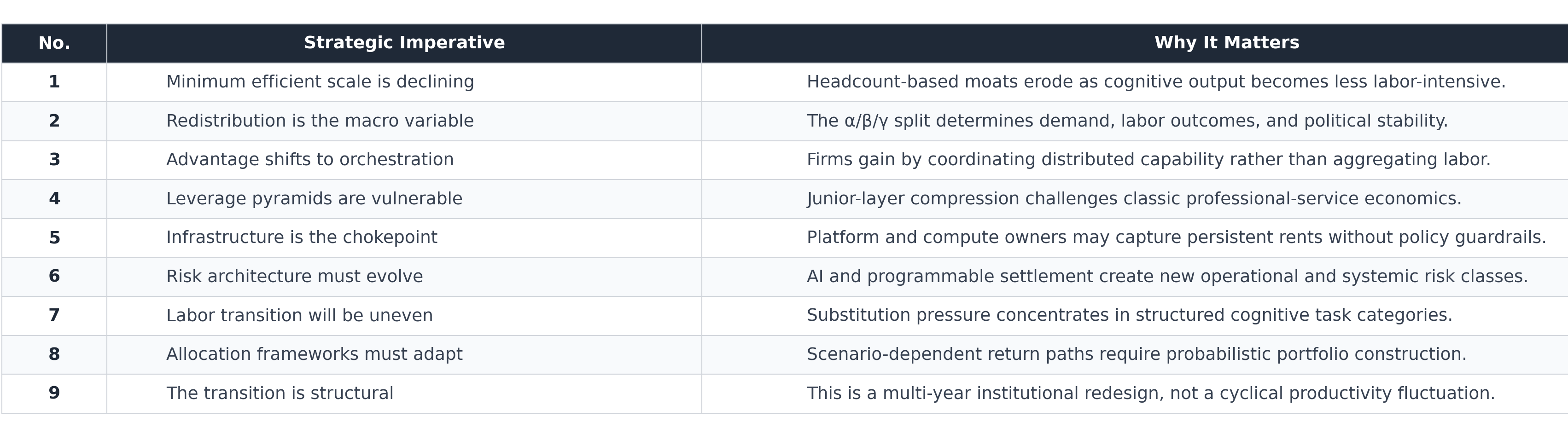

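Surplus formation is only the first-order question. The more consequential question, with direct implications for income distribution, labor share dynamics, and political economy, is who captures the surplus once formed. Three distribution coefficients govern this allocation: α, the share captured as corporate profit; β, the share accruing as micro-enterprise income; and γ, the share extracted as infrastructure rent.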

By construction, α + β + γ = 1. The three coefficients are not independent: the concentration of the AI infrastructure market, the design of procurement channels, the degree of open-model diffusion, and the architecture of regulatory oversight all systematically push the equilibrium toward different distributions. Three illustrative scenarios anchor the policy-analytic space.

Scenario 1 — Balanced Capture (α = 0.40, β = 0.30, γ = 0.30): In this scenario, no single agent class dominates the distribution. Corporate earnings grow modestly, independent operators find real but bounded income expansion, and infrastructure providers capture a significant but not overwhelming rent. Against the central-case surplus of $720 billion, this implies approximately $288 billion accruing to corporate earnings, $216 billion to micro-enterprise income, and $216 billion to platform infrastructure rent. This distribution is relatively benign from a labor share perspective: the micro-enterprise channel converts displaced wage income into self-employment income, preserving a meaningful share within the household sector. Political economy pressure is moderate, as the gains are sufficiently dispersed to limit concentrated backlash.

Scenario 2 — Infrastructure-Dominant Capture (α = 0.25, β = 0.15, γ = 0.60): This scenario describes a world in which a small number of compute and model platforms extract the dominant share of the surplus through pricing power, API monopoly, and network-effect moats. At $720 billion in total surplus, infrastructure providers capture approximately $432 billion — a remarkable concentration in an industry measured in dozens of material participants. Corporate profits are modest because firms face high AI input costs, and micro-enterprise formation is dampened because the economics of operating on top of expensive proprietary infrastructure are thin. This scenario most directly mirrors the historical pattern of industrial platform consolidation and is, arguably, the default trajectory absent deliberate countervailing policy. Its political economy consequences are severe: labor share declines, household income concentration rises, and the fiscal base erodes as infrastructure rents accumulate in entities with sophisticated tax optimization capacity.

Scenario 3 — Distributed AI Equilibrium (α = 0.35, β = 0.45, γ = 0.20): This is the most transformative distributional outcome. Independent operators and AI-augmented solo practitioners capture the largest single share — approximately $324 billion — while corporate incumbents retain a meaningful but not dominant portion and infrastructure rent is compressed by open-model diffusion and competitive supply. This scenario requires the proliferation of low-cost, high-quality open-source or open-weight foundation models, accessible fine-tuning infrastructure, and mature tooling ecosystems that allow individual operators to reach professional output quality at near-zero marginal cost. It also requires robust demand for micro-enterprise output, whether through consumer markets, enterprise procurement platforms that disaggregate vendor relationships, or new forms of project-based contracting. Labor share does not decline under this scenario; indeed, by converting displaced wage workers into independent income earners, the macro distribution may actually improve relative to the pre-AI baseline.
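The dollar allocations in the three scenarios follow mechanically from the coefficients and the central-case surplus. The sketch below reproduces that arithmetic; the function and dictionary names are illustrative, and amounts are in billions of dollars.

```python
SURPLUS_BN = 720.0  # central-case surplus, in billions of dollars

SCENARIOS = {
    "Balanced Capture": (0.40, 0.30, 0.30),
    "Infrastructure-Dominant Capture": (0.25, 0.15, 0.60),
    "Distributed AI Equilibrium": (0.35, 0.45, 0.20),
}


def allocate(surplus_bn: float, alpha: float, beta: float, gamma: float) -> dict:
    """Split the surplus across the three channels; coefficients must sum to 1."""
    if abs(alpha + beta + gamma - 1.0) > 1e-9:
        raise ValueError("alpha + beta + gamma must equal 1")
    return {
        "corporate_profit": alpha * surplus_bn,
        "micro_enterprise_income": beta * surplus_bn,
        "infrastructure_rent": gamma * surplus_bn,
    }


for name, coefficients in SCENARIOS.items():
    shares = allocate(SURPLUS_BN, *coefficients)
    summary = ", ".join(f"{k} = ${v:,.0f}B" for k, v in shares.items())
    print(f"{name}: {summary}")
```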

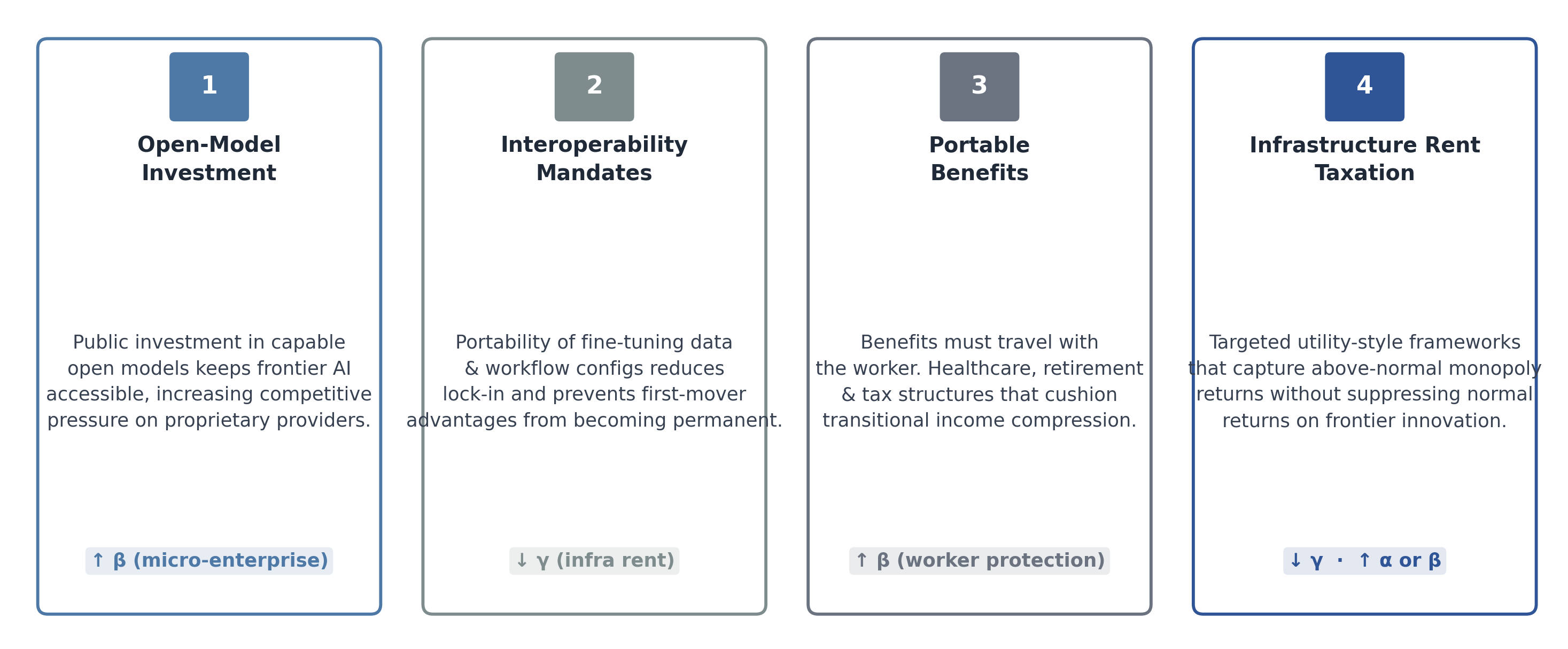

The three distribution coefficients are not exogenously fixed; they are endogenous to the institutional architecture of the AI economy. Four structural determinants are analytically primary. Infrastructure concentration is perhaps the most important: when compute and model access are controlled by a small number of vertically integrated providers, γ rises mechanically, compressing both α and β. Procurement design — specifically, whether large enterprises purchase AI output from integrated vendors or through competitive micro-procurement markets that expose independent operators — is the second determinant. Open-model diffusion, accelerated by the release of competitive open-weight models, is the third: as the frontier capability gap between proprietary and open models narrows, infrastructure rents are disciplined and β rises. Regulatory architecture — particularly around data access, interoperability mandates, and API governance — shapes the underlying competitive dynamics that determine all three coefficients simultaneously.

There is historical precedent for a significant lag between demonstrated technological capability and measurable macroeconomic productivity growth. The original Solow paradox, the observation that the computer age was visible “everywhere but in the productivity statistics,” persisted for well over a decade before the 1990s acceleration. Several structural mechanisms create an analogous risk for the current AI transition. First, if the surplus is captured primarily as corporate margin expansion rather than output expansion, measured GDP growth does not necessarily accelerate proportionally: the same nominal output is produced at lower cost, improving profitability without adding value to the national accounts. Second, a significant fraction of AI productivity gains accrues through quality improvements in professional outputs (more rigorous analysis, faster iteration, reduced error rates) that are not captured by output price deflators calibrated against legacy product categories. Third, the micro-enterprise channel, which may absorb a large fraction of the surplus under the distributed equilibrium scenario, is poorly tracked in national statistics: self-employment income is under-measured, productivity growth in the unincorporated business sector is imputed rather than directly observed, and platform-mediated gig work sits in measurement grey zones. The practical implication is that policymakers and central bankers should expect a period in which AI-driven surplus is economically real but statistically invisible, and should resist interpreting measured productivity stagnation as evidence of technological underperformance.

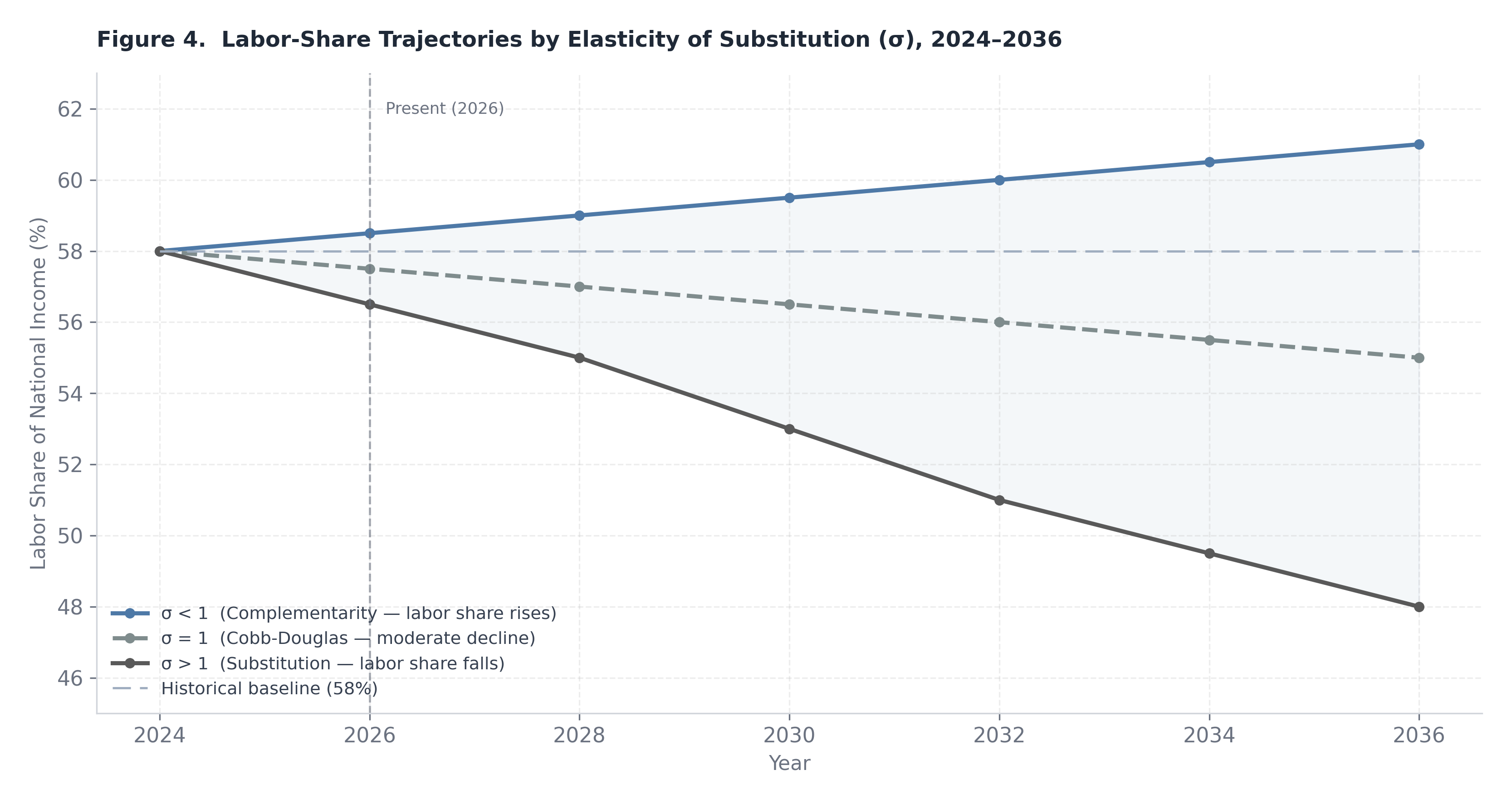

Figure 4. Scenario-based labor-share trajectories under distributed, hybrid, and platform-dominant equilibria.

A rigorous analysis of the Convergence Economy’s long-run distributional consequences requires a production-function framework capable of representing the substitutability relationship between AI capital and human labor. The standard CES (constant elasticity of substitution) production function provides the appropriate vehicle:

Y = A[α_K · (K_AI)^ρ + α_L · L^ρ]^(1/ρ)

where Y is aggregate output, A is total factor productivity, K_AI is the stock of AI-embodied capital (including compute infrastructure, trained model weights, and AI-augmented software systems), L is effective labor input, α_K and α_L are distribution parameters governing the relative intensity of each factor, and ρ is the substitution parameter. The elasticity of substitution between AI capital and labor is then given by:

σ = 1/(1−ρ)

The value of σ is the pivotal structural parameter for labor-share dynamics. When σ > 1, capital and labor are gross substitutes: as AI capital becomes cheaper relative to labor, firms substitute toward it, and the labor share of output declines. When σ < 1, capital and labor are gross complements: increased AI capital deployment raises the marginal product of labor, driving wages up, and the labor share is approximately stable or potentially rises. When σ = 1, the production function collapses to the Cobb-Douglas form, in which factor shares are determined entirely by the distribution parameters and are independent of relative factor prices.
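The dependence of the labor share on σ can be illustrated numerically. The sketch below assumes competitive factor pricing, under which the CES labor share equals α_L · L^ρ / (α_K · (K_AI)^ρ + α_L · L^ρ); that pricing assumption, the equal distribution parameters, and the input quantities are illustrative choices rather than values from the manuscript.

```python
def sigma(rho: float) -> float:
    """Elasticity of substitution: sigma = 1 / (1 - rho)."""
    return 1.0 / (1.0 - rho)


def ces_output(k_ai: float, labor: float, rho: float,
               alpha_k: float = 0.5, alpha_l: float = 0.5, tfp: float = 1.0) -> float:
    """CES output Y = A * [alpha_K * K_AI^rho + alpha_L * L^rho]^(1/rho), for rho != 0."""
    if rho == 0:
        raise ValueError("rho = 0 is the Cobb-Douglas limit and is not handled here")
    return tfp * (alpha_k * k_ai**rho + alpha_l * labor**rho) ** (1.0 / rho)


def labor_share(k_ai: float, labor: float, rho: float,
                alpha_k: float = 0.5, alpha_l: float = 0.5) -> float:
    """Labor share of output when factors are paid their marginal products."""
    labor_term = alpha_l * labor**rho
    capital_term = alpha_k * k_ai**rho
    return labor_term / (labor_term + capital_term)


# Quadruple AI capital while holding labor fixed, under two substitution regimes.
for rho in (0.5, -1.0):
    low_k = labor_share(k_ai=1.0, labor=1.0, rho=rho)
    high_k = labor_share(k_ai=4.0, labor=1.0, rho=rho)
    print(f"sigma = {sigma(rho):.1f}: labor share {low_k:.2f} -> {high_k:.2f}")
```

With σ above one the labor share falls as AI capital deepens, and with σ below one it rises, which is the directional result described in the paragraph above.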

The empirical question of whether σ exceeds or falls short of unity is settled neither by theory nor by existing empirical estimates, which vary substantially by sector, skill tier, and task granularity. The Convergence Economy’s distinctive feature is that it does not impose a single regime: different sectors and different task categories face different substitutability environments, and the aggregate economy-wide σ is best understood as a capacity-weighted average across a heterogeneous sector distribution.

Two limiting regimes define the analytic space. Under complementarity dominance (σ < 1), AI capital deployment raises the effective productivity of each unit of labor rather than replacing it. An attorney who can perform exhaustive case-law research in minutes rather than hours is more productive, not redundant; a financial analyst whose modeling time compresses from days to hours can cover more clients, generate more insights, and command a wage premium reflecting the expanded scope of deliverables. In this regime, the labor productivity term in the production function rises faster than AI capital deployment compresses headcount demand, and wages increase proportionally with productivity. The labor share of output remains approximately stable, and the primary distributional effect of AI is to shift the wage distribution toward skill tiers that complement AI output — creating significant within-labor inequality even as the aggregate labor share holds.

Under substitution dominance (σ > 1), AI capital is deployed to reduce headcount rather than augment it. The marginal cost of an AI-generated deliverable falls below the marginal cost of a human-generated equivalent, and rational firms substitute toward the cheaper input. Labor demand declines at the margin, wage bargaining power weakens as the outside option for employers (AI replacement) becomes credible and affordable, and the capital income share rises at the expense of labor. This regime does not require mass layoffs or dramatic short-run displacement; it can manifest as a persistent structural slowdown in employment growth in affected sectors, wage stagnation below productivity growth, and an expansion of corporate margins that is systematically not passed through to compensation.

The Convergence Economy almost certainly produces mixed regimes distributed unevenly across the sectoral landscape. Infrastructure and platform sectors — compute, logistics, software development, financial operations — are plausibly substitution-heavy, as the primary value of AI in these domains is cost reduction and throughput expansion rather than quality enhancement. Relational and judgment-intensive sectors — complex legal advocacy, executive advisory, healthcare diagnosis, high-touch client management — are more plausibly complementarity-heavy, as human judgment, relational trust, and contextual authority retain scarcity value that AI output cannot fully replicate at current capability levels. The aggregate distributional outcome will be determined by which sector group expands and which contracts as a share of economic activity over the decade ahead.

The Convergence Economy introduces a structural mechanism that distinguishes it from prior waves of labor-displacing technology: the simultaneous compression of both cognitive labor costs and transaction costs. Prior automation waves — numerical control manufacturing, enterprise resource planning, early software — primarily compressed routine manual and clerical labor, leaving the professional-managerial wage mass relatively intact and indeed expanding it as organizational complexity increased. The current transition attacks at a different stratum. Knowledge work — analysis, documentation, synthesis, compliance, and research — constitutes the core of the white-collar wage mass, and it is precisely these task categories where current-generation AI systems demonstrate the highest effective substitutability.

This matters for aggregate labor-share dynamics because the white-collar wage mass represents a disproportionate share of total compensation. The United States labor share of GDP has declined from approximately 60 percent of national income in the mid-twentieth century to the 53–55 percent range in recent years, a trend conventionally attributed to globalization, offshoring of manufacturing, the automation of routine production labor, skill-biased technological change, and capital deepening. The Convergence Economy augments this secular decline with a new compression mechanism that operates on precisely the labor tiers that had previously been insulated from displacement: the cognitive, credentialed, and higher-income professional workforce. If AI-driven productivity gains in these tiers accrue primarily to corporate margins rather than to labor compensation or independent income generation, the labor share trajectory worsens materially from an already historically depressed baseline.

The structural fork is therefore as follows: if AI primarily raises corporate margins in professional services and information industries — through headcount reduction, pyramid compression, and per-unit cost deflation — the labor share declines further, income concentration rises, and the political economy of redistribution intensifies. If, alternatively, AI enables a proliferation of distributed operator income — allowing former analysts, associates, and junior professionals to operate as independent practitioners at near-incumbent output quality — then the labor share can be approximately preserved, though the vehicle shifts from wage employment to self-employment income. This is not merely a productivity story. It is a distributional bargaining story, and the outcome depends on structural features of the market that lie outside the production function itself: procurement norms, platform governance, professional licensing architecture, and the degree of open-model access.

Even under scenarios where the long-run equilibrium is relatively benign — a distributed AI economy with moderate labor share stability — the transition path involves frictions that can impose substantial and unevenly distributed short-run costs. Retraining lags are the most analytically tractable: the skills required to operate as an effective AI-augmented independent practitioner — prompt engineering fluency, workflow design, quality validation, client positioning — are neither trivially acquired nor uniformly distributed across the incumbent workforce. Older workers, workers in geographically concentrated industry clusters, and workers in highly credentialed professions with strong licensing barriers face the highest transition costs and the longest adjustment horizons. Geographic immobility compounds this dynamic: the sectors most exposed to AI compression — professional services, financial operations, legal documentation, software — are geographically concentrated in a small number of metropolitan areas, and the alternative income opportunities available in those areas may be more limited than aggregate employment statistics suggest. Benefits structure rigidity introduces a third friction: employer-sponsored health insurance, defined-benefit pension residuals, and other firm-linked benefits create implicit lock-in that delays voluntary transition to independent operator status even where the income opportunity is demonstrably superior.

The macroeconomic policy implications of these dynamics are consequential and likely underappreciated by current institutional frameworks. In the near to medium term, AI-driven cost compression in professional services will exert sustained downward pressure on the price of knowledge-intensive outputs: legal fees, consulting rates, software development costs, and financial advisory margins will face deflationary pressure. For central banks, this service-sector disinflation may initially read as a favorable supply-side shock — declining inflation with stable or rising output — consistent with durable productivity expansion. The risk is that monetary authorities mistake margin-driven price stability for genuine productivity acceleration, maintain accommodative stances longer than warranted, and fail to build in sufficient slack for the eventual realization that measured productivity growth has not matched the financial market valuations that the AI narrative has supported.

On the fiscal side, the distributional consequences of the Convergence Economy create two distinct challenges depending on which scenario materializes. If capital concentration increases and the infrastructure-dominant scenario prevails, fiscal redistribution pressures intensify rapidly: wealth concentration accelerates, the wage tax base erodes, and the political economy around wealth and capital income taxation resurfaces with renewed urgency. Meaningful policy responses would include infrastructure rent taxation, AI-specific capital income surtaxes, and productivity dividend mechanisms designed to return a portion of AI-generated surplus to displaced workers. If, alternatively, the micro-enterprise proliferation scenario prevails, the fiscal challenge shifts toward the revenue base: a large and growing self-employed population generates income through platforms, project markets, and direct client relationships that are substantially more difficult to observe and tax than wage income. Compliance modernization — real-time reporting infrastructure, platform withholding requirements, and streamlined estimated tax systems — becomes a fiscal priority not as a punitive measure but as an enabling condition for sustainable public finance in a distributed income economy.

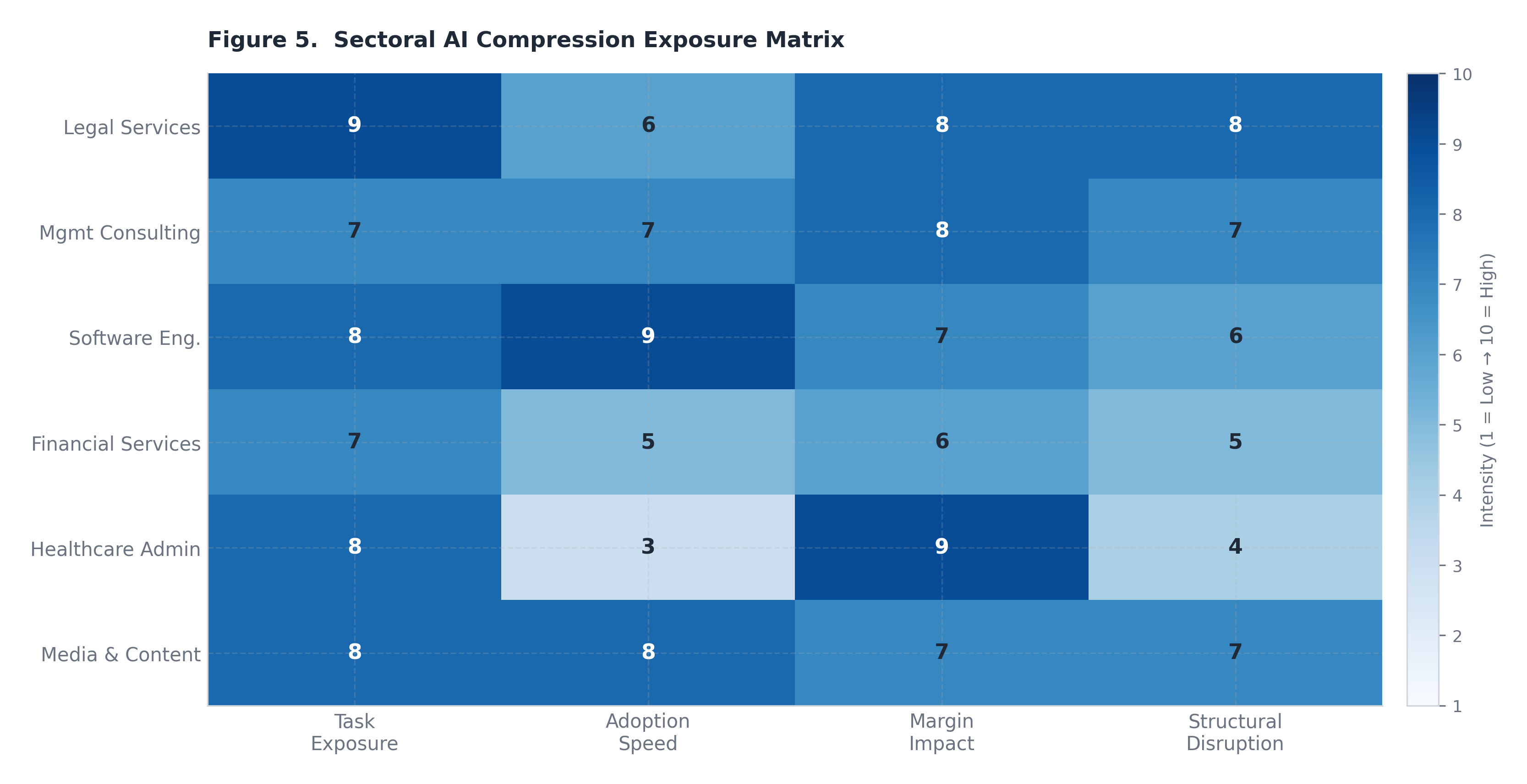

Figure 5. Relative sectoral intensity scores for AI compression exposure, settlement leverage, regulatory constraints, and transition friction.

The microeconomic and general equilibrium dynamics analyzed in the preceding sections manifest differently across sectors, with variation determined by regulatory environment, the composition of compressible versus non-compressible task categories, and the legacy organizational structures through which AI compression must be absorbed. This section examines six sectors in depth.

Financial services occupies a position of unusual strategic complexity in the Convergence Economy: it is simultaneously one of the most heavily exposed sectors and one of the most institutionally constrained in its capacity to absorb transformation rapidly. The exposure dimensions are broad and deep. Middle-office operations (model documentation, stress testing narrative, risk committee reporting, regulatory capital attribution) represent substantial professional headcount that is directly addressable by current-generation AI systems. Compliance functions, whose cost base has grown dramatically in the post-2008 regulatory environment, involve significant volumes of document review, regulatory interpretation, and reporting cycle management that AI can compress materially. Treasury operations, particularly in multi-entity corporate and institutional contexts, involve liquidity management, counterparty credit surveillance, and collateral optimization tasks that are ripe for AI-augmented automation. Cross-border payment operations, where the latency and cost structure of correspondent banking create ongoing friction, face structural pressure both from AI-optimized workflow tools and from the emergence of stablecoin settlement rails that compress wire timing from days to seconds and reduce per-transaction cost by an order of magnitude.

The strategic implications for institutional incumbents are layered. In the near term, the most visible consequence is a structural shift in the composition of compliance and risk functions: fewer junior analysts performing manual document review and regulatory research, and more AI supervisors — hybrid roles defined by the capacity to design, validate, and oversee AI-generated outputs rather than produce them directly. This role transformation will compress headcount growth at the analyst and associate tier while potentially creating modest demand for a new category of technically fluent oversight professionals. More disruptively, the rise of AI-native risk and compliance boutiques — small teams of domain experts operating AI systems capable of replicating the output of much larger internal teams — will introduce genuine competitive pressure on internal functions that have historically been justified as scale-intensive. If an external provider can deliver regulatory reporting and model documentation at lower cost than an in-house team of comparable output quality, the institutional calculus for internalization weakens.

Settlement infrastructure modernization compounds this competitive dynamic. As programmable escrow mechanisms, automated settlement triggers, and stablecoin rails mature, the institutional intermediary layers that currently extract revenue from timing friction and counterparty risk management face margin compression. Treasury modernization — the ability to programmatically manage liquidity across entity structures in near real time — reduces dependence on the short-term funding and cash management services that have historically represented a stable revenue source for commercial banks and custodians. The board-level metrics that should guide strategic planning in this environment include model provider concentration ratios (measuring operational dependency on a small number of AI vendors), settlement rail diversification scores, and regulatory audit readiness indices that reflect AI-generated documentation quality under examiner scrutiny. The foundational strategic question is not whether to deploy AI but which functions remain genuinely core to internalize when external AI-augmented operators can replicate output at lower unit cost — and what organizational capabilities constitute durable competitive advantage when the labor arbitrage that previously justified internalization has eroded.
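The board-level metrics named above are not given a formula in the manuscript. As a minimal sketch, a model provider concentration ratio could be computed with the Herfindahl-Hirschman index (HHI), a standard concentration measure, and a diversification score could be taken as its complement. The choice of HHI, the function names, and the vendor spend figures below are illustrative assumptions, not the manuscript's own definitions.

```python
from typing import Mapping


def provider_shares(spend_by_provider: Mapping[str, float]) -> dict[str, float]:
    """Convert absolute spend (or volume) per provider into shares that sum to 1."""
    total = sum(spend_by_provider.values())
    if total <= 0:
        raise ValueError("total spend must be positive")
    return {name: amount / total for name, amount in spend_by_provider.items()}


def hhi(shares: Mapping[str, float]) -> float:
    """Herfindahl-Hirschman index on a 0-1 scale: the sum of squared shares."""
    return sum(share ** 2 for share in shares.values())


def top_k_ratio(shares: Mapping[str, float], k: int) -> float:
    """Combined share of the k largest providers (a CR-k concentration ratio)."""
    return sum(sorted(shares.values(), reverse=True)[:k])


def diversification_score(shares: Mapping[str, float]) -> float:
    """Assumed definition: 1 - HHI, so 0 means full dependence on one provider."""
    return 1.0 - hhi(shares)


# Hypothetical annual AI spend by vendor, in arbitrary currency units.
ai_spend = {"vendor_a": 6.0, "vendor_b": 3.0, "vendor_c": 1.0}
shares = provider_shares(ai_spend)

print(round(hhi(shares), 3))                    # 0.46
print(round(top_k_ratio(shares, 2), 3))         # 0.9
print(round(diversification_score(shares), 3))  # 0.54
```

The same functions could be applied to settlement volume by rail to produce a settlement rail diversification score. Under this assumed definition, a rising HHI over successive reporting periods would signal growing operational dependency on a small number of vendors.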

Few sectors face as direct and structurally consequential an exposure to AI-mediated compression as management consulting and professional advisory services. The traditional consulting business model is built on a specific form of labor arbitrage: experienced partners and managing directors, who command premium fees and generate institutional relationships, leverage their time through a pyramid of more junior resources — managers, senior associates, analysts — who perform the research, modeling, data assembly, and document production that constitute the bulk of deliverable hours. The economics of this model are well understood: a small number of senior professionals generate revenue at rates that are multiples of their direct cost, the pyramid structure enables the firm to absorb volume fluctuations, and client willingness to pay reflects the perceived credibility of the senior relationship as much as the intrinsic value of any individual deliverable.

AI compresses the analyst and associate layers most heavily and most immediately. The task categories that define junior consulting work — market sizing, competitive landscaping, financial benchmarking, presentation drafting, qualitative synthesis of interview notes — are precisely the categories where current-generation AI systems demonstrate high effective substitutability at acceptable output quality. A single senior professional operating with well-designed AI tooling can produce outputs that previously required a team of three to five junior resources, completing deliverable cycles in days rather than weeks. In the short run, this creates significant margin expansion for incumbent firms: the revenue model does not immediately compress because clients continue to price advisory engagements based on established brand and relationship value, while the cost base shrinks as junior hiring slows. This margin expansion phase, however, contains the seeds of a more fundamental structural disruption.

The medium-run consequences are more corrosive to the traditional model. As AI capability becomes commoditized and clients develop sophistication about what AI can deliver independently, the value proposition of a large advisory pyramid becomes harder to defend. The rise of two-to-five person AI-native advisory practices — small teams of highly experienced domain experts who use AI to produce consulting-grade output at dramatically reduced cost and timeline — creates a competitive alternative that is structurally advantaged on unit economics. These firms cannot replicate the global delivery network or brand capital of a McKinsey or BCG, but for a significant proportion of advisory mandates — particularly those where diagnostic rigor and analytical depth matter more than brand signaling or implementation scale — they represent a credible and cost-effective substitute. The billable-hour pricing model faces particular pressure: when the primary driver of fees was labor time and senior oversight of junior effort, hourly billing was a natural metric. When AI compresses the time required for analytical deliverables, hourly billing systematically underprices expertise and overprices production. The strategic pivot that preserves long-run margin in this environment is a shift from labor-leverage models to expertise-plus-brand-plus-relationship models, and from time-based to outcome-based pricing — in which the fee reflects the value of the insight or decision supported, not the hours of professional time logged.
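The pricing argument above can be illustrated with a small numerical sketch. It compares the fee for the same engagement under hourly billing before and after AI compresses junior production hours, and under an outcome-based fee tied to the value of the decision supported. All hours, rates, the decision value, and the fee share are hypothetical figures chosen for illustration; none comes from the manuscript.

```python
from dataclasses import dataclass


@dataclass
class Engagement:
    """Hours and hourly rates for one advisory engagement (all values hypothetical)."""
    senior_hours: float
    junior_hours: float
    senior_rate: float
    junior_rate: float

    def hourly_fee(self) -> float:
        """Fee under time-based billing: hours times rate, summed over both tiers."""
        return (self.senior_hours * self.senior_rate
                + self.junior_hours * self.junior_rate)


def outcome_fee(decision_value: float, fee_share: float) -> float:
    """Fee under outcome-based pricing: an agreed share of the value supported."""
    return decision_value * fee_share


# Before AI: a senior professional leverages a large block of junior hours.
before = Engagement(senior_hours=40, junior_hours=400,
                    senior_rate=800, junior_rate=250)

# After AI: junior production hours fall sharply, while senior hours rise
# slightly to cover validation of AI-generated output (an assumption).
after = Engagement(senior_hours=50, junior_hours=40,
                   senior_rate=800, junior_rate=250)

print(f"hourly fee, before AI: {before.hourly_fee():,.0f}")  # 132,000
print(f"hourly fee, after AI:  {after.hourly_fee():,.0f}")   # 50,000

# The client's decision is worth the same in both cases, so an outcome-based
# fee does not fall when production time falls.
print(f"outcome-based fee:     {outcome_fee(2_000_000, 0.06):,.0f}")  # 120,000
```

In this sketch the hourly fee falls by more than half even though the client receives the same insight, which is the sense in which time-based billing underprices expertise once production time is compressed. The outcome-based fee is unaffected by the change in hours.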

Legal services presents a more stratified exposure profile than consulting. The profession encompasses a wide range of task categories that differ dramatically in their AI substitutability, and understanding this heterogeneity is essential to accurately characterizing the sector’s transformation trajectory. At one end of the substitutability spectrum, the tasks that define high-volume legal work in large firms and corporate departments — discovery document review, contract drafting and markup, legal research and citation, regulatory filings and compliance memoranda — are highly compressible. AI systems have already demonstrated performance in these categories that meets or exceeds junior associate quality standards on a per-document basis, at a fraction of the cost and time. The economics of discovery review in particular, a category that has historically consumed enormous associate hours in major litigation, have shifted fundamentally: firms and legal operations departments that do not deploy AI in this category are at a structural cost disadvantage relative to those that do.

At the other end of the substitutability spectrum, the tasks that define the most remunerative legal work — complex commercial negotiation, courtroom advocacy, jury persuasion, appellate strategy, and the exercise of judgment in novel regulatory or transactional contexts — retain a high degree of human non-substitutability. These tasks require not merely the retrieval and synthesis of legal information but the contextual application of strategic judgment, the reading of human dynamics, and the authority that derives from professional credentialing and personal reputation. AI augments the preparation for these activities significantly; it does not replicate the activities themselves.

The structural consequence of this asymmetry is a bifurcation of the legal services market. Small AI-augmented practices staffed by experienced attorneys operating with advanced legal AI tooling will increasingly dominate the market for routine documentation, contract review, compliance advisory, and high-volume transactional work — winning on cost efficiency and turnaround speed. Large firms will retain competitive advantage in complex litigation, major M&A advisory, regulatory crisis management, and practice areas where the institutional brand and team depth of a global firm represent a material client benefit. The segment facing the most acute strategic pressure is the mid-tier firm: large enough to carry the overhead of a multi-partner structure but insufficiently specialized or branded to compete with either the cost efficiency of AI-augmented boutiques or the institutional prestige and scale of global firms. Regulatory adaptation in the legal sector will lag behind technical capability, as bar associations, court systems, and professional responsibility frameworks grapple with disclosure obligations, unauthorized practice rules, and malpractice standards applicable to AI-generated legal work — creating a period of regulatory uncertainty that may paradoxically protect incumbent cost structures while the governance architecture catches up.

The media and marketing sector confronts AI-driven compression with a combination of acute short-run disruption and a more durable long-run competitive restructuring that actually rewards a different kind of asset than the one currently most valued. The compression dynamics are acute and already manifest: the cost of generating competent written content, graphic design assets, advertising copy variations, video scripts, and social media material has fallen by an order of magnitude with the deployment of current-generation AI tools. Marketing teams that previously required large content production workforces to maintain publishing cadences across multiple channels can now achieve equivalent output volume with dramatically reduced headcount. This is not speculative — the contraction in content marketing employment observable in the 2023–2025 period reflects this dynamic directly.

The more interesting strategic question is what the cost compression of content production reveals about the underlying value architecture of media and marketing. When production capacity was scarce and expensive, having a large creative team was a competitive moat. When production capacity is abundant and cheap, the binding constraint shifts upstream to the inputs that AI cannot commoditize: narrative originality, brand voice authority, audience trust, and distribution reach. The creative differentiation that matters in an AI-saturated content environment is not the ability to produce at scale — any organization can do that — but the capacity to produce content that carries genuine epistemic or emotional authority with a specific audience. Independent creators who have built authentic relationships with defined communities are, paradoxically, better positioned in this environment than large content factories whose competitive advantage was volume production, because the scarcity they represent — genuine human perspective and earned trust — is precisely what AI abundance makes more valuable in contrast.